
Theory and Practice of Depthwise Separable Convolutional Networks (TF2.0)


1. Theory of depthwise separable convolutional networks

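Depthwise separable convolution is a variant of the ordinary convolution operation and can replace ordinary convolutions when building a convolutional neural network. It trades some accuracy for fewer computations and fewer parameters, which makes depthwise separable networks light enough to run on mobile phones.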

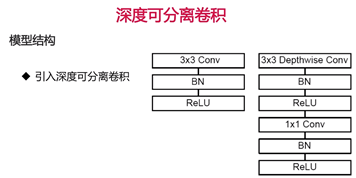

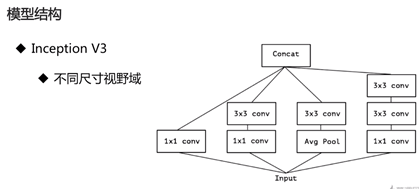

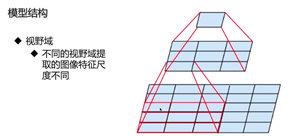

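The structure in the right-hand figure replaces the one on the left. Its advantage is that it has receptive fields of different sizes. The figure below shows the Inception V3 structure: from left to right, the receptive fields are 1*1, 3*3, 6*6 and 5*5. The four branches therefore see four different receptive fields and extract image features at four different scales, so the final output carries richer information and works better. Compared with not branching, the structure is also more efficient and requires less computation.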
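A rough multiply-add count is one way to make the efficiency claim concrete. All sizes below are hypothetical and chosen only for illustration: a 28*28*192 input and 256 output channels, compared as either one 5*5 convolution producing all 256 channels, or four parallel branches (1*1, 3*3, 5*5, and a 1*1 after pooling) producing 64 channels each. The branches read the input directly (no 1*1 bottleneck layers), and the cost of pooling is ignored.

```python
# Hypothetical sizes for illustration only; they are not taken from the article.
H, W, C_IN = 28, 28, 192   # input feature map: height, width, channels
C_OUT = 256                # total output channels

def conv_macs(h, w, k, c_in, c_out):
    """Multiply-adds of a stride-1, 'same'-padded k*k convolution."""
    return h * w * k * k * c_in * c_out

# Unbranched: a single 5*5 convolution produces all 256 output channels.
single = conv_macs(H, W, 5, C_IN, C_OUT)

# Branched: four parallel branches with 64 output channels each.
# Kernel sizes 1, 3, 5, and 1 (the last one follows pooling, whose cost is ignored).
branch_kernels = [1, 3, 5, 1]
per_branch = C_OUT // len(branch_kernels)
branched = sum(conv_macs(H, W, k, C_IN, per_branch) for k in branch_kernels)

print(f"single 5*5 conv: {single:,} MACs")
print(f"four branches:   {branched:,} MACs ({branched / single:.0%} of the single conv)")
```

Under these assumptions the branched block needs 36% of the multiply-adds, because only a quarter of the output channels pay for the largest kernel.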

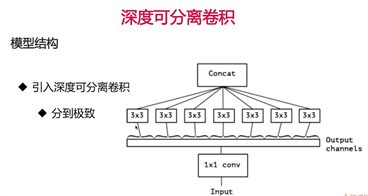

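Depthwise separable convolution is also a branched network structure, except that its branches are built over different channels: input -> 1*1 convolution -> multi-channel output -> one branch per channel (3 channels, 3 branches) -> the branch results are merged.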
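A small NumPy sketch can make the per-channel branching concrete. Note the ordering: the description above puts the 1*1 convolution first (the Inception-style view used in the Xception paper), while Keras's `SeparableConv2D` and this sketch apply the per-channel (depthwise) spatial convolution first and the 1*1 (pointwise) convolution second. Both split the work into a per-channel spatial step and a cross-channel mixing step. The helper names, the 'valid' padding, and the absence of biases are assumptions made for brevity. The check at the end shows that the two steps together equal one ordinary convolution whose kernel factorizes as `W[i, j, m, n] = dw[i, j, m] * pw[m, n]`, using far fewer weights.

```python
import numpy as np

def depthwise_conv(x, dw):
    """Per-channel 'valid' 2D convolution (cross-correlation, as in deep-learning libraries).

    x: (H, W, M) input; dw: (K, K, M), one K*K filter per input channel.
    Output channel m only ever sees input channel m (one "branch" per channel).
    """
    k = dw.shape[0]
    h_out, w_out = x.shape[0] - k + 1, x.shape[1] - k + 1
    out = np.zeros((h_out, w_out, x.shape[2]))
    for i in range(h_out):
        for j in range(w_out):
            patch = x[i:i + k, j:j + k, :]                  # (K, K, M)
            out[i, j, :] = (patch * dw).sum(axis=(0, 1))    # one value per channel
    return out

def pointwise_conv(x, pw):
    """1*1 convolution: mixes channels at every pixel. pw: (M, N)."""
    return x @ pw                                           # (H, W, M) @ (M, N) -> (H, W, N)

def standard_conv(x, w):
    """Ordinary 'valid' convolution. w: (K, K, M, N)."""
    k = w.shape[0]
    h_out, w_out = x.shape[0] - k + 1, x.shape[1] - k + 1
    out = np.zeros((h_out, w_out, w.shape[3]))
    for i in range(h_out):
        for j in range(w_out):
            patch = x[i:i + k, j:j + k, :]
            out[i, j, :] = np.tensordot(patch, w, axes=([0, 1, 2], [0, 1, 2]))
    return out

rng = np.random.default_rng(0)
x = rng.normal(size=(6, 6, 3))    # 3 input channels, as in the 3-branch example
dw = rng.normal(size=(3, 3, 3))   # depthwise: one 3*3 kernel per channel
pw = rng.normal(size=(3, 4))      # pointwise: 3 -> 4 channels

separable = pointwise_conv(depthwise_conv(x, dw), pw)

# Equivalent ordinary convolution with the factorized kernel.
w_full = dw[:, :, :, None] * pw[None, None, :, :]
print(np.allclose(separable, standard_conv(x, w_full)))  # True
print(dw.size + pw.size, w_full.size)                    # 39 108
```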

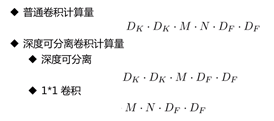

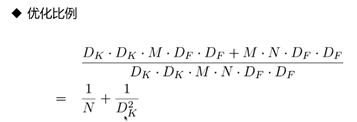

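Depthwise separable convolution requires far less computation than ordinary convolution. In the formulas, DK denotes the kernel size, M the number of input channels, N the number of output channels, and DF the size of the input image (feature map).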
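For reference, these are the multiply-add counts commonly used for this comparison, in the form given in the MobileNets paper (Howard et al.). They write DK and DF as $D_K$ and $D_F$ and assume stride 1 with padding that keeps the output at $D_F \times D_F$. In the separable case, the first term is the depthwise step (one $D_K \times D_K$ filter per input channel) and the second is the 1*1 pointwise step:

$$
\begin{aligned}
\text{standard convolution:}\quad & D_K \cdot D_K \cdot M \cdot N \cdot D_F \cdot D_F \\
\text{depthwise separable:}\quad & D_K \cdot D_K \cdot M \cdot D_F \cdot D_F + M \cdot N \cdot D_F \cdot D_F \\
\text{ratio:}\quad & \frac{D_K \cdot D_K \cdot M \cdot D_F \cdot D_F + M \cdot N \cdot D_F \cdot D_F}{D_K \cdot D_K \cdot M \cdot N \cdot D_F \cdot D_F} = \frac{1}{N} + \frac{1}{D_K^2}
\end{aligned}
$$

For example, with $D_K = 3$ and $N = 128$ the ratio is $1/128 + 1/9 \approx 0.119$, i.e. roughly 8 to 9 times fewer multiply-adds.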

In Keras, depthwise separable convolution is already implemented as `keras.layers.SeparableConv2D`, so it can be used just like `Conv2D`. In a depthwise separable convolutional network, an ordinary convolution is usually applied at the input layer, and depthwise separable convolutions are used everywhere else. The network structure is as follows:

```
Model: "sequential"
_________________________________________________________________
Layer (type)                 Output Shape              Param #
=================================================================
conv2d (Conv2D)              (None, 28, 28, 32)        320
_________________________________________________________________
separable_conv2d (SeparableC (None, 28, 28, 32)        1344
_________________________________________________________________
max_pooling2d (MaxPooling2D) (None, 14, 14, 32)        0
_________________________________________________________________
separable_conv2d_1 (Separabl (None, 14, 14, 64)        2400
_________________________________________________________________
separable_conv2d_2 (Separabl (None, 14, 14, 64)        4736
_________________________________________________________________
max_pooling2d_1 (MaxPooling2 (None, 7, 7, 64)          0
_________________________________________________________________
separable_conv2d_3 (Separabl (None, 7, 7, 128)         8896
_________________________________________________________________
separable_conv2d_4 (Separabl (None, 7, 7, 128)         17664
_________________________________________________________________
max_pooling2d_2 (MaxPooling2 (None, 3, 3, 128)         0
_________________________________________________________________
flatten (Flatten)            (None, 1152)              0
_________________________________________________________________
dense (Dense)                (None, 128)               147584
_________________________________________________________________
dense_1 (Dense)              (None, 10)                1290
=================================================================
Total params: 184,234
Trainable params: 184,234
Non-trainable params: 0
_________________________________________________________________
```

Code:

```python
import os
import sys
import time

import matplotlib as mpl
import matplotlib.pyplot as plt
import numpy as np
import pandas as pd
import sklearn
import tensorflow as tf
from sklearn.preprocessing import StandardScaler
from tensorflow import keras

# In Jupyter, uncomment the next line to draw plots inside the notebook.
# %matplotlib inline

print(tf.__version__)
print(sys.version_info)
for module in mpl, np, pd, sklearn, tf, keras:
    print(module.__name__, module.__version__)

# Fashion-MNIST: 28x28 grayscale images; the first 5,000 training images are held out for validation.
fashion_mnist = keras.datasets.fashion_mnist
(x_train_all, y_train_all), (x_test, y_test) = fashion_mnist.load_data()
x_valid, x_train = x_train_all[:5000], x_train_all[5000:]
y_valid, y_train = y_train_all[:5000], y_train_all[5000:]
print(x_valid.shape, y_valid.shape)
print(x_train.shape, y_train.shape)
print(x_test.shape, y_test.shape)

# Standardize pixel values (scaler fitted on the training set only),
# then reshape to (batch, 28, 28, 1) for the convolution layers.
scaler = StandardScaler()
x_train_scaled = scaler.fit_transform(
    x_train.astype(np.float32).reshape(-1, 1)).reshape(-1, 28, 28, 1)
x_valid_scaled = scaler.transform(
    x_valid.astype(np.float32).reshape(-1, 1)).reshape(-1, 28, 28, 1)
x_test_scaled = scaler.transform(
    x_test.astype(np.float32).reshape(-1, 1)).reshape(-1, 28, 28, 1)

# Ordinary Conv2D at the input layer, SeparableConv2D everywhere else.
model = keras.models.Sequential()
model.add(keras.layers.Conv2D(filters=32, kernel_size=3, padding='same',
                              activation='selu', input_shape=(28, 28, 1)))
model.add(keras.layers.SeparableConv2D(filters=32, kernel_size=3, padding='same', activation='selu'))
model.add(keras.layers.MaxPool2D(pool_size=2))
model.add(keras.layers.SeparableConv2D(filters=64, kernel_size=3, padding='same', activation='selu'))
model.add(keras.layers.SeparableConv2D(filters=64, kernel_size=3, padding='same', activation='selu'))
model.add(keras.layers.MaxPool2D(pool_size=2))
model.add(keras.layers.SeparableConv2D(filters=128, kernel_size=3, padding='same', activation='selu'))
model.add(keras.layers.SeparableConv2D(filters=128, kernel_size=3, padding='same', activation='selu'))
model.add(keras.layers.MaxPool2D(pool_size=2))
model.add(keras.layers.Flatten())
model.add(keras.layers.Dense(128, activation='selu'))
model.add(keras.layers.Dense(10, activation="softmax"))

model.compile(loss="sparse_categorical_crossentropy",
              optimizer="sgd",
              metrics=["accuracy"])
model.summary()

# Output directory for TensorBoard logs and the best checkpoint.
logdir = "separable-cnn-selu-callbacks"
os.makedirs(logdir, exist_ok=True)
output_model_file = os.path.join(logdir, "fashion_mnist_model.h5")

callbacks = [
    keras.callbacks.TensorBoard(logdir),
    # Keep only the model with the best validation loss.
    keras.callbacks.ModelCheckpoint(output_model_file, save_best_only=True),
    # Stop if val_loss improves by less than 1e-3 for 5 consecutive epochs.
    keras.callbacks.EarlyStopping(patience=5, min_delta=1e-3),
]
history = model.fit(x_train_scaled, y_train, epochs=10,
                    validation_data=(x_valid_scaled, y_valid),
                    callbacks=callbacks)

def plot_learning_curves(history):
    """Plot training and validation loss/accuracy per epoch."""
    pd.DataFrame(history.history).plot(figsize=(8, 5))
    plt.grid(True)
    plt.gca().set_ylim(0, 3)
    plt.show()

plot_learning_curves(history)

# Prints [test loss, test accuracy].
print(model.evaluate(x_test_scaled, y_test, verbose=0))
```
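As a cross-check of the summary above, the parameter counts can be reproduced by hand. This is a minimal pure-Python sketch, assuming Keras's defaults for `SeparableConv2D` (`depth_multiplier=1`, `use_bias=True`): a 3*3 depthwise kernel per input channel, a 1*1 pointwise kernel, and one bias per output channel. The helper functions are hypothetical and not part of the Keras API.

```python
def conv2d_params(k, c_in, c_out):
    """Ordinary convolution: one k*k*c_in kernel per output channel, plus biases."""
    return k * k * c_in * c_out + c_out

def separable_conv2d_params(k, c_in, c_out):
    """Separable convolution (depth_multiplier=1): k*k depthwise kernel per input
    channel, c_in*c_out pointwise (1*1) weights, plus c_out biases."""
    return k * k * c_in + c_in * c_out + c_out

def dense_params(n_in, n_out):
    """Fully connected layer: weight matrix plus biases."""
    return n_in * n_out + n_out

# Layers with parameters, in the same order as model.summary().
layers = [
    ("conv2d",             conv2d_params(3, 1, 32)),
    ("separable_conv2d",   separable_conv2d_params(3, 32, 32)),
    ("separable_conv2d_1", separable_conv2d_params(3, 32, 64)),
    ("separable_conv2d_2", separable_conv2d_params(3, 64, 64)),
    ("separable_conv2d_3", separable_conv2d_params(3, 64, 128)),
    ("separable_conv2d_4", separable_conv2d_params(3, 128, 128)),
    ("dense",              dense_params(3 * 3 * 128, 128)),   # Flatten gives 1152 inputs
    ("dense_1",            dense_params(128, 10)),
]
for name, n in layers:
    print(f"{name:20s}{n:>8,}")
total = sum(n for _, n in layers)
print("total", total)

# The same five separable layers, had they been ordinary 3*3 Conv2D layers.
sep_specs = [(32, 32), (32, 64), (64, 64), (64, 128), (128, 128)]
sep_total = sum(separable_conv2d_params(3, a, b) for a, b in sep_specs)
conv_total = sum(conv2d_params(3, a, b) for a, b in sep_specs)
print(sep_total, conv_total)
```

The total comes out at 184,234, matching `model.summary()`. The five separable layers hold 35,040 parameters against 286,112 as ordinary convolutions, roughly 8 times fewer. In this small model, most parameters actually sit in the first `Dense` layer (147,584).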
