python |


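This article walks through a worked example of a crawler built with the Scrapy framework.

Contents
Preface
Introduction to Scrapy
1. The Scrapy workflow
2. Workflow overview
Steps
1. Create a Scrapy project
2. Run
3. Code

Preface

This article crawls second-hand housing listings on Lianjia for the nine main urban districts of Chongqing and stores the scraped data in a MySQL database.

Introduction to Scrapy

1. The Scrapy workflow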

Then follow the prompts it prints. For reference, see the official Scrapy documentation, which can be translated into Chinese.

To run the crawler, run the following in a terminal. The argument is the spider's name attribute (here, lianjia), not the project name:

    scrapy crawl <spider name>

3. Code

main.py

    # -*- coding: utf-8 -*-
    import random
    import re
    import time

    import scrapy

    from ..items import PythonQmItem


    class LianjiaSpider(scrapy.Spider):
        name = 'lianjia'
        allowed_domains = ['lianjia.com']

        # The nine main urban districts: Yuzhong, Dadukou, Jiangbei, Shapingba,
        # Jiulongpo, Nan'an, Beibei, Yubei, Banan
        list_x = ['yuzhong', 'dadukou', 'jiangbei', 'shapingba', 'jiulongpo',
                  'nanan', 'beibei', 'yubei', 'banan']

        # Listing pages 1-79 for each district
        start_urls = []
        for j in list_x:
            for i in range(1, 80):
                url1 = 'https://cq.lianjia.com/ershoufang/{}/pg{}/'.format(j, i)
                start_urls.append(url1)

        def parse(self, response):
            """Parse one listing page and follow each listing to its detail page."""
            div_list = response.xpath('//ul[@class="sellListContent"]/li')
            for li in div_list:
                item = PythonQmItem()
                # Link to the detail page
                item['link'] = li.xpath('./div[@class="info clear"]/div[@class="title"]/a/@href').extract_first()
                # Listing title
                item['name'] = li.xpath('./div[@class="info clear"]/div[@class="title"]/a/text()').extract_first()
                # Number of followers and how long ago the listing was posted
                item['follow_release'] = li.xpath('./div[@class="info clear"]/div[@class="followInfo"]/text()').extract_first()
                # Address
                item['small_add'] = li.xpath('./div[@class="info clear"]/div[@class="flood"]/div/a[1]/text()').extract_first()
                # Area
                item['big_add'] = li.xpath('./div[@class="info clear"]/div[@class="flood"]/div/a[2]/text()').extract_first()
                # House information
                item['houseInfo'] = li.xpath('./div[@class="info clear"]/div[@class="address"]/div/text()').extract_first()
                # Total price
                item['totalPrice'] = li.xpath(
                    './div[@class="info clear"]/div[@class="priceInfo"]/div[1]/span/text()').extract_first()
                # Price per square meter
                item['Price_per_square_meter'] = li.xpath(
                    './div[@class="info clear"]/div[@class="priceInfo"]/div[2]/span/text()').extract_first()
                # Pass the partly filled item on to the detail-page callback
                yield scrapy.Request(item['link'], callback=self.parse_detail, meta={'item': item})

        def parse_detail(self, response):
            """Fill in the remaining fields from the detail page."""
            item = response.meta['item']
            # Note: extract() returns a list of strings, not a single string
            # Listing ID
            item['ID'] = response.xpath('/html/body/div[5]/div[2]/div[5]/div[4]/span[2]/text()').extract()
            # District
            item['areaName'] = response.xpath('//div[@class="aroundInfo"]/div[@class="areaName"]/span[@class="info"]/a[1]/text()').extract()
            # Orientation
            item['orientation'] = response.xpath('//*[@id="introduction"]/div/div/div[1]/div[2]/ul/li[7]/text()').extract()
            # House type
            item['type'] = response.xpath('//*[@id="introduction"]/div/div/div[1]/div[2]/ul/li[1]/text()').extract()
            # Floor
            item['Floor'] = response.xpath('//*[@id="introduction"]/div/div/div[1]/div[2]/ul/li[2]/text()').extract()
            # Decoration status
            item['Decoration'] = response.xpath('//*[@id="introduction"]/div/div/div[1]/div[2]/ul/li[9]/text()').extract()
            # Interior area
            item['Inside_area'] = response.xpath('//*[@id="introduction"]/div/div/div[1]/div[2]/ul/li[5]/text()').extract()
            # Whether there is an elevator
            item['elevator'] = response.xpath('//*[@id="introduction"]/div/div/div[1]/div[2]/ul/li[11]/text()').extract()
            # Listing date
            item['Listing_time'] = response.xpath('//*[@id="introduction"]/div/div/div[2]/div[2]/ul/li[1]/span[2]/text()').extract()
            # Year built
            item['building_age'] = response.xpath('//div[@class="xiaoqu_main fl"]/div[2]/span[@class="xiaoqu_main_info"]/text()').extract()
            # Average price in the residential community
            village_avg = response.xpath('//*[@id="resblockCardContainer"]/div/div/div[2]/div/div[1]/span/text()').extract()
            item['village_avg'] = re.sub(r"\s", "", ",".join(village_avg))
            # Transaction ownership type (commodity housing, villa, and so on)
            item['T_ownership'] = response.xpath('//*[@id="introduction"]/div/div/div[2]/div[2]/ul/li[2]/span[2]/text()').extract()
            # Random 1-3 s pause. Note that time.sleep blocks Scrapy's event
            # loop; the DOWNLOAD_DELAY setting is the non-blocking alternative.
            time.sleep(random.randint(1, 3))
            yield item

items.py

    import scrapy


    class PythonQmItem(scrapy.Item):
        # Listing title
        name = scrapy.Field()
        # Link
        link = scrapy.Field()
        # Number of followers and how long ago the listing was posted
        follow_release = scrapy.Field()
        # Address
        small_add = scrapy.Field()
        # Area
        big_add = scrapy.Field()
        # House information
        houseInfo = scrapy.Field()
        # Total price
        totalPrice = scrapy.Field()
        # Price per square meter
        Price_per_square_meter = scrapy.Field()
        # Listing ID
        ID = scrapy.Field()
        # District
        areaName = scrapy.Field()
        # Orientation
        orientation = scrapy.Field()
        # House type
        type = scrapy.Field()
        # Floor
        Floor = scrapy.Field()
        # Decoration status
        Decoration = scrapy.Field()
        # Interior area
        Inside_area = scrapy.Field()
        # Whether there is an elevator
        elevator = scrapy.Field()
        # Listing date
        Listing_time = scrapy.Field()
        # Year built
        building_age = scrapy.Field()
        # Average price in the residential community
        village_avg = scrapy.Field()
        # Transaction ownership type
        T_ownership = scrapy.Field()

pipelines.py

    import pymysql


    def dbHandle():
        conn = pymysql.connect(host='localhost', user='root', password='123456', db='house_2')
        return conn


    class PythonQmPipeline(object):
        # Columns written to house_2.ef, in insert order
        fields = ['name', 'link', 'follow_release', 'small_add', 'big_add',
                  'houseInfo', 'totalPrice', 'Price_per_square_meter',
                  'areaName', 'ID', 'orientation', 'type', 'Floor',
                  'Decoration', 'Inside_area', 'elevator', 'Listing_time',
                  'T_ownership']

        def open_spider(self, spider):
            # Open one connection for the whole crawl instead of one per item
            self.conn = dbHandle()
            self.cursor = self.conn.cursor()

        def close_spider(self, spider):
            self.cursor.close()
            self.conn.close()

        def process_item(self, item, spider):
            print(item)
            # Insert the item into the database
            sql = 'insert into house_2.ef({}) values ({})'.format(
                ','.join(self.fields), ','.join(['%s'] * len(self.fields)))
            try:
                self.cursor.execute(sql, [item[f] for f in self.fields])
                self.conn.commit()
            except Exception as e:
                print("Error:", e)
                self.conn.rollback()
            return item

settings.py

GitHub source code, which also includes the data analysis and model-building parts. Time to eat, let's go!


Google Translate saved my life, haha.

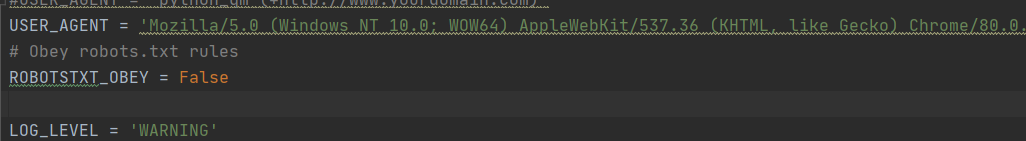

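USER_AGENT: the User-Agent request header. You can set one here, or replace it with a pool of user agents.

ROBOTSTXT_OBEY: whether to obey robots.txt. Emmm, well, we don't.

LOG_LEVEL: the log level. Here only messages at WARNING level and above are shown. The levels are:
CRITICAL (critical errors)
ERROR (errors)
WARNING (warnings)
INFO (general information)
DEBUG (debugging information)

For more, see the Logging page of the Scrapy documentation.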
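The three settings described above might look like the following in settings.py. This is only a minimal sketch: the user-agent string is a placeholder of my own, not the value used in the original project. The last few lines are not part of a real settings file; they use Python's standard logging levels to show which messages a WARNING threshold lets through.

```python
import logging

# Placeholder User-Agent string (an assumption, not the author's value).
USER_AGENT = "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36"

# The article chooses not to obey robots.txt.
ROBOTSTXT_OBEY = False

# Only show log messages at WARNING level or above.
LOG_LEVEL = "WARNING"

# Illustration only: list the levels that pass the LOG_LEVEL threshold.
all_levels = ["CRITICAL", "ERROR", "WARNING", "INFO", "DEBUG"]
threshold = getattr(logging, LOG_LEVEL)
shown = [name for name in all_levels if getattr(logging, name) >= threshold]
print(shown)
```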

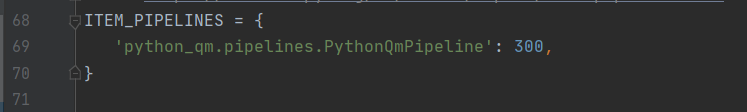

ITEM_PIPELINES: a dictionary containing the item pipelines to use and their order. The order values are arbitrary, but by convention they are defined in the 0-1000 range. Pipelines with lower values are processed before those with higher values.

The number 300 can be changed, and more pipelines can be added to ITEM_PIPELINES. Priority follows the number: the larger the number, the later the pipeline runs.
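To make the ordering rule concrete, here is a small sketch in plain Python. The module path python_qm and the second pipeline, CleanFieldsPipeline, are hypothetical names made up for illustration; the article does not show the real project package name or a second pipeline.

```python
# Hypothetical module paths: the real project package name is not shown
# in the article, and CleanFieldsPipeline does not exist there.
ITEM_PIPELINES = {
    "python_qm.pipelines.PythonQmPipeline": 300,
    "python_qm.pipelines.CleanFieldsPipeline": 100,
}

# Lower numbers run first, so sort the pipeline paths by their order value.
run_order = sorted(ITEM_PIPELINES, key=ITEM_PIPELINES.get)
for path in run_order:
    print(ITEM_PIPELINES[path], path)
```

With these values the hypothetical cleaning pipeline (100) would see each item before the MySQL pipeline (300).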

That's it, the end!
