python爬虫 |

您所在的位置:网站首页 › 意大利旅游景点排名前十 › python爬虫 |

python爬虫

|

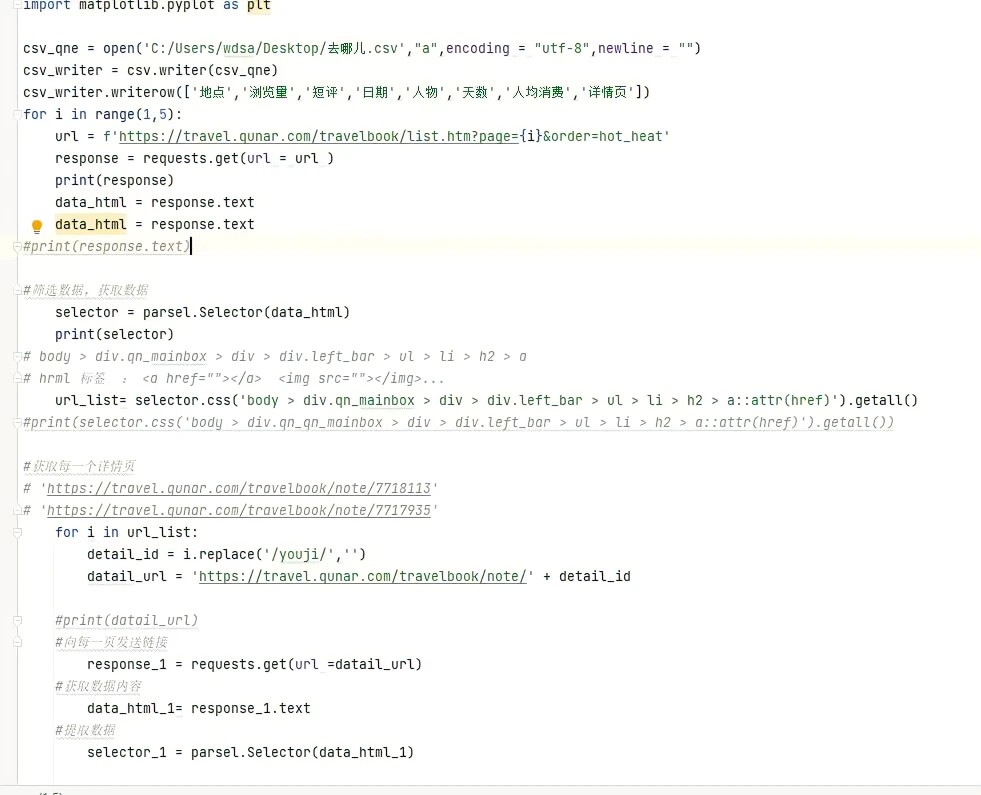

#筛选数据 import parsel import csv import time import random import pandas import matplotlib.pyplot as plt from pyecharts import Map import jieba from wordcloud import WordCloud, STOPWORDS, ImageColorGenerator from PIL import Image from os import path ##数据可视化 import matplotlib.pyplot as plt 2.数据的抓取

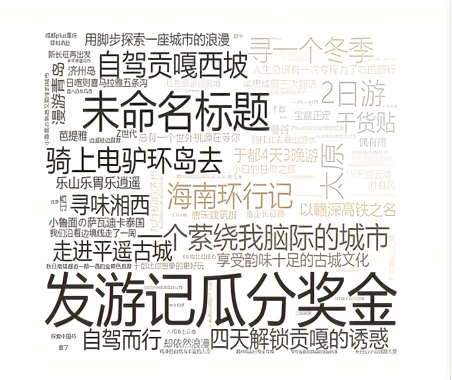

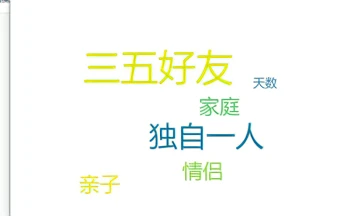

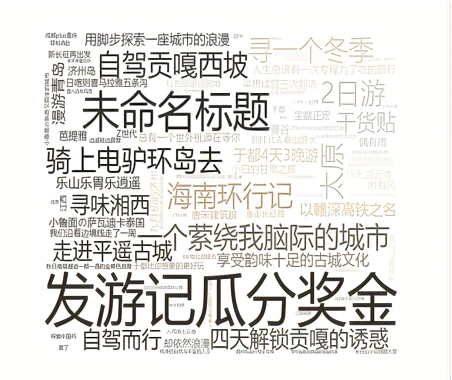

3.主题云词图 list_all = [] text = '' with open('C:/Users/wdsa/Desktop/去哪儿.csv', 'r', encoding='utf8') as file: t = file.read() file.close() for i in title_list: if type(i) == float: pass else: list_all.append(i) txt = " ".join(list_all) backgroud_Image = plt.imread('C:/Users/wdsa/Desktop/阳光.jpg') print('加载图片成功!') w = WordCloud( font_path="msyh.ttc", width=1000, height=800, background_color="white", stopwords=STOPWORDS, max_font_size=150 ) w.generate(txt) print('开始加载文本') img_colors = ImageColorGenerator(backgroud_Image) w.recolor(color_func=img_colors) plt.imshow(w) plt.axis('off') plt.show() d = path.dirname(__file__) # w.to_file(d,"C:/Users/wdsa/Desktop/wordcloud.jpg")!!!!!!!!!!! print('生成词云成功!')

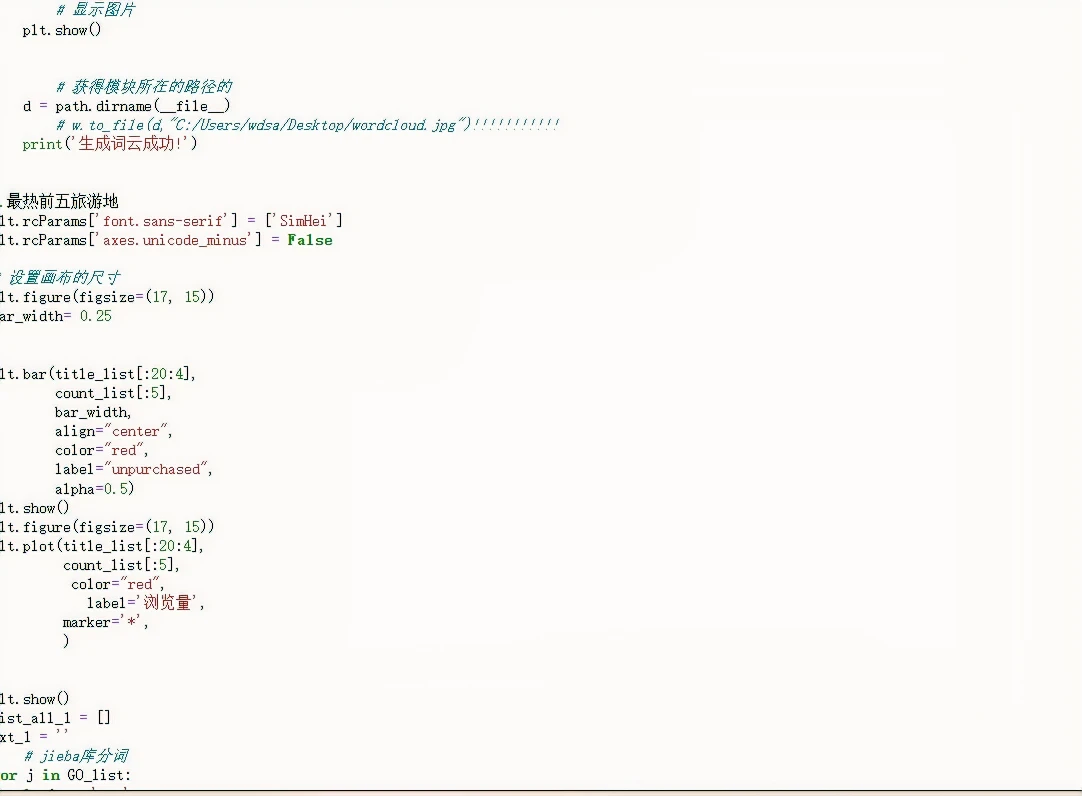

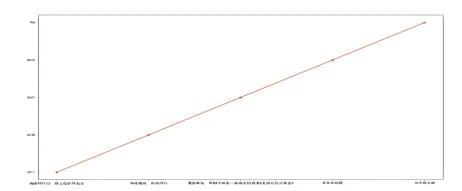

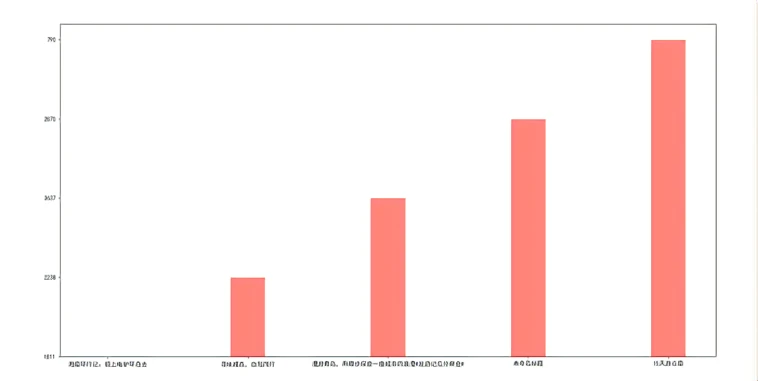

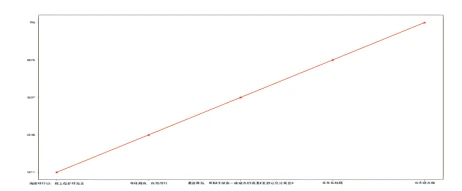

4.爬取浏览量前五的主题

6.出行方式云词图 list_all_1 = [] txt_1 = '' for j in GO_list: if i == 'nan': pass elif type(j) == float: pass else: list_all_1.append(j) txt_1 = " ".join(list_all_1) backgroud_Image = plt.imread('C:/Users/wdsa/Desktop/阳光.jpg') print('加载图片成功!') pose= WordCloud( font_path="msyh.ttc", width=1000, height=800, background_color="white", stopwords=STOPWORDS, max_font_size=150, ) pose.generate(txt_1) print('开始加载文本') img = ImageColorGenerator(backgroud_Image) w.recolor(color_func=img) plt.imshow(pose) plt.axis('off') plt.show() d = path.dirname(__file__) print('生成词云成功!')

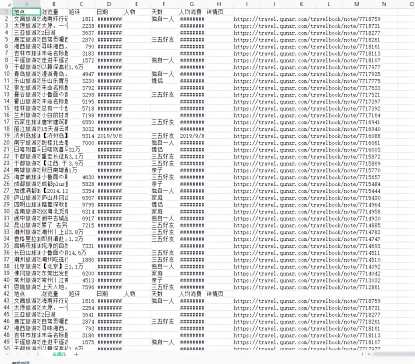

完整代码 import random import time import pandas as pd import requests import parsel import csv import time import random import pandas import matplotlib.pyplot as plt from pyecharts import Map import jieba from wordcloud import WordCloud, STOPWORDS, ImageColorGenerator from PIL import Image from os import path import matplotlib.pyplot as plt csv_qne = open('C:/Users/wdsa/Desktop/去哪儿.csv',"a",encoding = "utf-8",newline = "") csv_writer = csv.writer(csv_qne) csv_writer.writerow(['地点','浏览量','短评','日期','人物','天数','人均消费','详情页']) for i in range(1,5): url = f'https://travel.qunar.com/travelbook/list.htm?page={i}&order=hot_heat' response = requests.get(url = url ) print(response) data_html = response.text data_html = response.text selector = parsel.Selector(data_html) print(selector) for i in url_list: detail_id = i.replace('/youji/','') datail_url = 'https://travel.qunar.com/travelbook/note/' + detail_id response_1 = requests.get(url =datail_url) data_html_1= response_1.text selector_1 = parsel.Selector(data_html_1) title = selector_1.css('.b_crumb_cont *:nth-child(3)::text').get() comment = selector_1.css('.title.white::text').get() count = selector_1.css('.view_count::text').get() data = selector_1.css('#js_mainleft > div.b_foreword > ul > li.f_item.when > p > span.data::text').get() days = selector_1.css('#js_mainleft > div.b_foreword > ul > li.f_item.howloog > p > span.data::text').get() character = selector_1.css('#js_mainleft > div.b_foreword > ul > li.f_item.howlong > p > span.data::text').get() money = selector_1.css('#js_mainleft > div.b_foreword > ul > li.f_item.who > p > span.data::text').get() play_list = selector_1.css('#js_mainleft > dix.b_foreword > ul > li.f_item.how > p > span.data::text').get() csv_writer.writerow([title,comment,count,data,days,money,data,play_list,datail_url]) time.sleep(1) csv_qne.close() title_list = [] speake = [] happer_day = [] count_list = [] days_list = [ GO_list = [] meony_list = [] url_list_to = [] af= pd.read_csv('C:/Users/wdsa/Desktop/去哪儿.csv') for i in af['地点']: title_list.append(i) for i in af['短评']: speake.append(i) for i in af['浏览量']: count_list.append(i) for i in af['日期']: days_list.append(i) for i in af['天数']: happer_day.append(i) for i in af['人物']: GO_list.append(i) for i in af['人均消费']: meony_list.append(i) for i in af['详情页']: url_list_to.append(i) df =pd.DataFrame(af) list_all = [] text = '' with open('C:/Users/wdsa/Desktop/去哪儿.csv', 'r', encoding='utf8') as file: t = file.read() file.close() for i in title_list: if type(i) == float: pass else: list_all.append(i) txt = " ".join(list_all) backgroud_Image = plt.imread('C:/Users/wdsa/Desktop/阳光.jpg') print('加载图片成功!') w = WordCloud( font_path="msyh.ttc", width=1000, height=800, background_color="white", stopwords=STOPWORDS, max_font_size=150, ) w.generate(txt) print('开始加载文本') img_colors = ImageColorGenerator(backgroud_Image) w.recolor(color_func=img_colors) plt.imshow(w) plt.axis('off') plt.show() d = path.dirname(__file__) print('生成词云成功!') plt.rcParams['font.sans-serif'] = ['SimHei'] plt.rcParams['axes.unicode_minus'] = False plt.figure(figsize=(17, 15)) bar_width= 0.25 plt.bar(title_list[:20:4], count_list[:5], bar_width, align="center", color="red", label="unpurchased", alpha=0.5) plt.show() plt.figure(figsize=(17, 15)) plt.plot(title_list[:20:4], count_list[:5], color="red", label='浏览量', marker='*', ) plt.show() list_all_1 = [] txt_1 = '' for j in GO_list: if i == 'nan': pass elif type(j) == float: pass else: list_all_1.append(j) txt_1 = " ".join(list_all_1) backgroud_Image = plt.imread('C:/Users/wdsa/Desktop/阳光.jpg') print('加载图片成功!') pose= WordCloud( font_path="msyh.ttc", width=1000, height=800, background_color="white", stopwords=STOPWORDS, max_font_size=150, ) pose.generate(txt_1) print('开始加载文本') img = ImageColorGenerator(backgroud_Image) w.recolor(color_func=img) plt.imshow(pose) plt.axis('off') plt.show() d = path.dirname(__file__) # w.to_file(d,"C:/Users/wdsa/Desktop/wordcloud.jpg")!!!!!!!!!!! print('生成词云成功!')

总结 综上所有数据可知,我们用去哪儿网对于国内旅游城市进行了一定的分析以及排名,让人们出游有更加合理的选择,更体现国内疫情解封后每个城市旅行的情况

|

【本文地址】

今日新闻 |

点击排行 |

|

推荐新闻 |

图片新闻 |

|

专题文章 |