
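Table of Contents

I. Background

II. Marking

1. Marking skeleton features

1) Removing unnecessary points

2) Features

III. Implementation

1. Getting keypoint coordinates

2. Computing features

1) Distance

2) Angle

3. Demo

4. Full code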

I. Background

My environment: Windows 10 + Python 3.7 + Anaconda3 + Jupyter 5.6.0

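Installing the OpenPose open-source library took some effort. I covered that in a previous article and won't repeat it here; the previous article also has the model download links.

The models used this time are the hand model and the pose-COCO model.

Hand: 22 keypoints (21 skeletal points; the 22nd represents the background). Body skeleton: the 18 keypoints of the COCO model. The previous article covered installation and basic usage; this article fuses the skeleton and the hand gestures so that both are detected and marked in the same image.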
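The 21 skeletal hand keypoints are joined into bones by the `hand_point_pairs` list in the full code. Rebuilding that list from a per-finger table shows how the indices are organized. This is a minimal sketch, assuming the usual OpenPose hand layout (0 is the wrist, followed by four keypoints per finger from thumb to little finger); `FINGERS` and `hand_skeleton_pairs` are hypothetical names, not part of the original code.

```python
# Assumed finger layout of the 21-point OpenPose hand model (0 = wrist);
# each finger is a chain of four keypoints running from the palm to the tip.
FINGERS = {
    "thumb": [1, 2, 3, 4],
    "index": [5, 6, 7, 8],
    "middle": [9, 10, 11, 12],
    "ring": [13, 14, 15, 16],
    "pinky": [17, 18, 19, 20],
}


def hand_skeleton_pairs(fingers=FINGERS):
    """Build the bone list: wrist -> first joint, then joint -> next joint."""
    pairs = []
    for chain in fingers.values():
        pairs.append([0, chain[0]])
        pairs.extend([a, b] for a, b in zip(chain, chain[1:]))
    return pairs


print(len(hand_skeleton_pairs()))  # 20 bones connect the 21 keypoints
```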

II. Marking

1. Marking skeleton features

1) Removing unnecessary points

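Last time the discussion was about dangerous driving. This time I need sign-language image recognition, so more key information about upper-body reference points has to be marked.

The figure is a screenshot from my training. The body movements in sign language all take place in the upper body, so we can ignore reference points 9, 10, 12 and 13 (the knees and ankles). (Images referenced from the Chinese sign language dataset DEVISIGN and from videos on sign-language public-service websites.)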
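Dropping those four points is a one-line filter over the 18-element keypoint list. A minimal sketch, assuming the list format produced by `getBoneKeypoints` in the full code ((x, y) tuples, or None when a point was not detected); `LOWER_BODY` and `keep_upper_body` are hypothetical names. The hips (8 and 11) are kept because the hand-to-hip distances use them.

```python
# COCO-18 indices of the lower-body points ignored for sign language
LOWER_BODY = {9, 10, 12, 13}  # RKnee, RAnkle, LKnee, LAnkle


def keep_upper_body(points):
    """Return {index: point} for the keypoints relevant to signing.

    None entries are kept, so later code can still tell "not detected"
    apart from "deliberately ignored".
    """
    return {i: p for i, p in enumerate(points) if i not in LOWER_BODY}


demo = [(i * 10, i * 5) for i in range(18)]  # dummy coordinates
demo[3] = None                               # pretend the right elbow was missed
kept = keep_upper_body(demo)
print(sorted(kept))  # indices 0-8, 11 and 14-17 remain
```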

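2) Features

Image recognition follows the pipeline shown above, and we are currently at the feature extraction and selection stage. Which features are worth extracting?

Example:

Criteria for dangerous driving: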

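Smoking: judged by the distance from each hand to the nose, i.e. the distances from points 4 and 7 to point 0.

Phone call: judged by the distance from the right hand to the right ear (or the left hand to the left ear), i.e. the distance from point 4 to point 16 (point 7 to point 17).

Tai chi pose recognition with an ordinary camera: tai chi has 23 forms, and the original author extracted arm poses and leg poses to suit that task. The original author's notes: the distances between fifteen pairs of body keypoints were used as features, and fifteen included angles were selected as angle features; both were combined into a single array.

Why are angles needed as an auxiliary parameter? With distance alone, a person farther from the camera appears smaller and every distance shrinks; a person closer to the camera appears larger and the distances grow, so distance by itself is far from enough. A segment's length alone does not fix its direction, whereas a vector (a segment with a fixed starting point, a direction and a magnitude) is uniquely determined in the image. An angle also stays the same when the whole figure is uniformly scaled. So here angles are used to constrain distances.

What are the drawbacks of introducing angles?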

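From the tai chi pose recognition work: combining distances and angles improves the ability to fit complex relationships. However, some angle features are zero in certain situations. For example, the 2-3-4 feature (the whole arm: the cosine of the angle formed by hand, elbow and shoulder) is zero when that angle is a right angle. When OpenPose loses a point, for instance when the hand at point 4 is not detected, the feature is also returned as zero, so it becomes identical to the value for a right angle. Relying on a single kind of information can therefore mislead training, which is why the tai chi training data was designed on the principle that distances constrain angles and angles constrain distances.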
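Both effects are easy to reproduce with a few made-up coordinates. This sketch is not the tai chi author's code: `dist` and `cos_at` are hypothetical helpers that follow this article's convention of returning 0 for a missing keypoint. The first part scales a pose by 2 (distance doubles, cosine unchanged); the second shows a right angle and a lost wrist producing the same cosine, while the distance feature still separates them.

```python
import math


def dist(p, q):
    """Pixel distance; 0 when a keypoint is missing (same convention as __distance)."""
    if p is None or q is None:
        return 0.0
    return math.hypot(p[0] - q[0], p[1] - q[1])


def cos_at(a, b, c):
    """Cosine of the angle at b; 0 when a keypoint is missing."""
    if a is None or b is None or c is None:
        return 0.0
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    norm = math.hypot(*v1) * math.hypot(*v2)
    return 0.0 if norm == 0 else (v1[0] * v2[0] + v1[1] * v2[1]) / norm


# Hypothetical right arm: shoulder (2), elbow (3), wrist (4)
shoulder, elbow, wrist = (100, 100), (160, 100), (200, 70)

# 1) Scale: the same pose twice as large (person closer to the camera)
big = [(2 * x, 2 * y) for x, y in (shoulder, elbow, wrist)]
print(dist(shoulder, wrist), dist(big[0], big[2]))  # the distance doubles
print(cos_at(shoulder, elbow, wrist), cos_at(*big))  # the cosine does not change

# 2) Missing point: a right angle and a lost wrist give the same cosine
right_wrist = (160, 40)  # elbow bent at exactly 90 degrees
print(cos_at(shoulder, elbow, right_wrist), cos_at(shoulder, elbow, None))
print(dist(elbow, right_wrist), dist(elbow, None))  # the distance tells them apart
```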

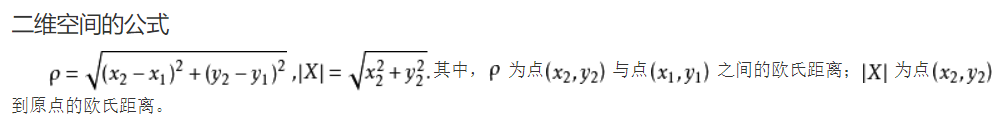

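Based on the analysis above, we tentatively distill 25 key features: 17 distances and 8 angles (the full list is in bonepointDistance and bonepointAngle in the full code). Now that the feature points are planned, on to the code: connect the points, circle and annotate them, and compute the lengths and angles.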

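III. Implementation

1. Getting keypoint coordinates

This mainly follows the previous article: OpenPose driver dangerous-driving detection (smoking and phone calls).

The previous article extracted the skeleton keypoints and the hand keypoints separately; here the two are fused. The idea is: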

Skeleton keypoints: pass in the original image and obtain the 18 keypoints of the COCO model.

Hand keypoints: use skeleton keypoints point[4] and point[7] to locate the two wrists. Centered on each wrist, crop a square whose half-side equals the forearm length (wrist to elbow), then run hand detection on each of the two crops.

Hand detection (the dancer in this image is wearing gloves, which affects the hand detection):

Notes on this part of the code:

1. When cropping, mind that the coordinate order is reversed: NumPy slices rows (y) first and columns (x) second, i.e. `rimg = img_cv2[y1:y2, x1:x2]`. I got this wrong at first and the crop box kept coming out wrong.
2. Handle the cases where the left or right hand does not appear in the image, and when the box extends past the image, crop only up to the image border:

```python
def getHandROI(self, imgfile, bonepoints):
    """Find the regions of interest for both hands and detect hand keypoints
    :param imgfile: image path
    :param bonepoints: COCO skeleton keypoints
    :return: right-hand keypoints, left-hand keypoints
    """
    img_cv2 = cv2.imread(imgfile)  # original image
    img_height, img_width, _ = img_cv2.shape
    rimg = img_cv2.copy()  # fallback copies, used if a hand is not detected
    limg = img_cv2.copy()
    # Crop a square centered on the right wrist, half-side = forearm length
    if bonepoints[4] and bonepoints[3]:  # right hand
        h = int(self.__distance(bonepoints[4], bonepoints[3]))  # forearm length
        x_center = bonepoints[4][0]
        y_center = bonepoints[4][1]
        x1 = x_center - h
        y1 = y_center - h
        x2 = x_center + h
        y2 = y_center + h
        print(x1, x2, x_center, y_center, y1, y2)
        # Clip the box to the image borders
        if x1 < 0:
            x1 = 0
        if x2 > img_width:
            x2 = img_width
        if y1 < 0:
            y1 = 0
        if y2 > img_height:
            y2 = img_height
        rimg = img_cv2[y1:y2, x1:x2]  # crop as [y0:y1, x0:x1]
    if bonepoints[7] and bonepoints[6]:  # left hand
        h = int(self.__distance(bonepoints[7], bonepoints[6]))  # forearm length
        x_center = bonepoints[7][0]
        y_center = bonepoints[7][1]
        x1 = x_center - h
        y1 = y_center - h
        x2 = x_center + h
        y2 = y_center + h
        print(x1, x2, x_center, y_center, y1, y2)
        if x1 < 0:
            x1 = 0
        if x2 > img_width:
            x2 = img_width
        if y1 < 0:
            y1 = 0
        if y2 > img_height:
            y2 = img_height
        limg = img_cv2[y1:y2, x1:x2]  # crop as [y0:y1, x0:x1]
    plt.figure(figsize=[10, 10])
    plt.subplot(1, 2, 1)
    plt.imshow(cv2.cvtColor(rimg, cv2.COLOR_BGR2RGB))
    plt.axis("off")
    plt.subplot(1, 2, 2)
    plt.imshow(cv2.cvtColor(limg, cv2.COLOR_BGR2RGB))
    plt.axis("off")
    plt.show()
    # Detect keypoints on each hand crop
    rhandpoints = self.getOneHandKeypoints(rimg)
    lhandpoints = self.getOneHandKeypoints(limg)
    # Display
    self.vis_hand_pose(rimg, rhandpoints)
    self.vis_hand_pose(limg, lhandpoints)
    return rhandpoints, lhandpoints
```
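The clipping in note 2 can be isolated into a small function and checked without any model. A minimal sketch with NumPy: `square_roi` is a hypothetical helper, not part of the original code, and the all-zero array stands in for a frame loaded with `cv2.imread` (height × width × channels).

```python
import numpy as np


def square_roi(center, half, img_w, img_h):
    """Square box of half-side `half` around `center`, clipped to the image.

    Returns (x1, y1, x2, y2) for use as img[y1:y2, x1:x2]; NumPy indexes
    rows (y) first, which is why y comes first in the slice.
    """
    cx, cy = center
    x1, y1 = max(cx - half, 0), max(cy - half, 0)
    x2, y2 = min(cx + half, img_w), min(cy + half, img_h)
    return x1, y1, x2, y2


img = np.zeros((480, 640, 3), dtype=np.uint8)  # H x W x C, like cv2.imread
# A wrist near the top-right corner: the box is cut off on two sides
x1, y1, x2, y2 = square_roi((620, 30), 50, img.shape[1], img.shape[0])
crop = img[y1:y2, x1:x2]
print((x1, y1, x2, y2), crop.shape)
```

Returning the clipped coordinates instead of the crop keeps the function testable and lets the same box be drawn with `cv2.rectangle`.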

OK, now you have the coordinates of both the hand keypoints and the skeleton keypoints.

2. Computing features

1) Distance

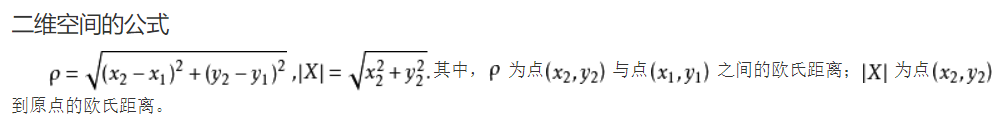

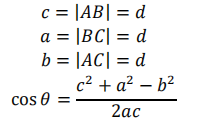

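Our images are two-dimensional, so the distance between points A(x1, y1) and B(x2, y2) is the Euclidean distance

$$d = |AB| = \sqrt{(x_1 - x_2)^2 + (y_1 - y_2)^2}$$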

```python
def __distance(self, A, B):
    """Distance helper
    :param A, B: two points A(x1, y1), B(x2, y2)
    :return: the distance |AB| (0 if either point is missing)
    """
    if A is None or B is None:
        return 0
    return math.sqrt((A[0] - B[0]) ** 2 + (A[1] - B[1]) ** 2)
```

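In the keypoint list built from OpenPose's output, a keypoint that was not detected (its confidence is below the threshold) is stored as None, so we also need a simple None check here. Without coordinates, how could we compute a distance? 🤣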
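The same None-safe distance is later applied to 17 keypoint pairs. One way to keep those pairs in a single table, instead of 17 hand-written calls, is sketched below. `DISTANCE_PAIRS` and `pair_distances` are hypothetical names; the pairs are copied in order from `bonepointDistance` in the full code, and the 0-for-missing convention mirrors `__distance`.

```python
import math

# (i, j) COCO index pairs, in the same order as bonepointDistance
DISTANCE_PAIRS = [(4, 8), (7, 11), (2, 4), (5, 7), (0, 4), (0, 7), (4, 7),
                  (4, 16), (7, 17), (4, 14), (7, 15), (4, 1), (7, 1),
                  (4, 5), (4, 6), (7, 2), (7, 3)]


def pair_distances(points, pairs=DISTANCE_PAIRS):
    """Distances for every pair; 0.0 when either keypoint is None."""
    out = []
    for i, j in pairs:
        p, q = points[i], points[j]
        out.append(0.0 if p is None or q is None
                   else math.hypot(p[0] - q[0], p[1] - q[1]))
    return out


pts = [None] * 18
pts[4], pts[8], pts[7] = (0, 0), (3, 4), (10, 0)  # dummy wrist and hip points
d = pair_distances(pts)
print(len(d), d[0], d[6])  # 17 features; right wrist-hip and wrist-wrist filled
```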

2) Angle

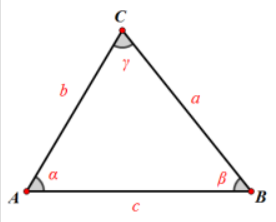

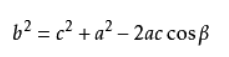

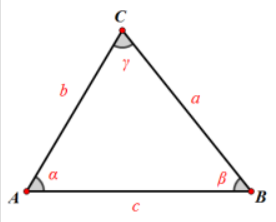

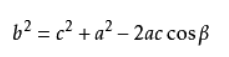

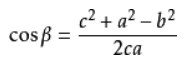

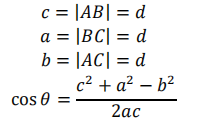

The reference points we kept form triples of the form A-B-C, where B is the vertex (for example, 2-3-4 is the angle of the right arm).

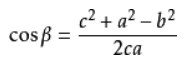

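Here we use the law of cosines, where a, b and c are the lengths of the sides opposite A, B and C:

$$a = |BC|, \quad b = |AC|, \quad c = |AB|$$

The formula for angle B:

$$\cos B = \frac{a^2 + c^2 - b^2}{2ac}, \qquad B = \arccos\left(\frac{a^2 + c^2 - b^2}{2ac}\right)$$

So we first compute the side lengths from the coordinates of the three points, then the cosine, and then the angle in radians with the arccosine. To convert radians to degrees, use math.degrees(); either unit works. (I convert here only so that the values are easier for me to read; in practice you can skip the conversion, since every extra computation costs a little speed.)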
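As a quick sanity check of the formula, here is a worked example with made-up points (not taken from the original figures): A = (0, 0), B = (3, 0), C = (3, 4).

$$a = |BC| = 4, \quad b = |AC| = 5, \quad c = |AB| = 3$$

$$\cos B = \frac{4^2 + 3^2 - 5^2}{2 \cdot 4 \cdot 3} = \frac{0}{24} = 0, \qquad B = \arccos 0 = \frac{\pi}{2}\ \text{rad} = 90^\circ$$

This is exactly the right-angle case from the tai chi discussion, where the cosine itself is 0.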

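```python
def __myAngle(self, A, B, C):
    """Angle helper
    :param A, B, C: three points A(x1, y1), B(x2, y2), C(x3, y3)
    :return: angle B in degrees (0 if a point is missing)
    """
    if A is None or B is None or C is None:
        return 0
    a = self.__distance(B, C)  # side opposite A
    b = self.__distance(A, C)  # side opposite B
    c = self.__distance(A, B)  # side opposite C
    if 2 * a * c != 0:
        cos_b = (a ** 2 + c ** 2 - b ** 2) / (2 * a * c)  # law of cosines
        cos_b = max(-1.0, min(1.0, cos_b))  # guard against rounding error
        return math.degrees(math.acos(cos_b))  # radians -> degrees
    return 0
```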
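To double-check the corrected law-of-cosines computation, the sketch below compares it with an independent vector formula (atan2 of the cross and dot products of the vectors B→A and B→C). Both helper names and the arm coordinates are hypothetical. The clamp to [-1, 1] keeps `math.acos` from raising `ValueError` when rounding pushes the cosine just past ±1, for example on a fully straight arm.

```python
import math


def law_of_cosines_angle(A, B, C):
    """Angle at B in degrees from the three side lengths."""
    a = math.hypot(B[0] - C[0], B[1] - C[1])  # side opposite A: |BC|
    b = math.hypot(A[0] - C[0], A[1] - C[1])  # side opposite B: |AC|
    c = math.hypot(A[0] - B[0], A[1] - B[1])  # side opposite C: |AB|
    if a == 0 or c == 0:
        return 0.0  # degenerate: B coincides with A or C
    cos_b = (a ** 2 + c ** 2 - b ** 2) / (2 * a * c)
    cos_b = max(-1.0, min(1.0, cos_b))
    return math.degrees(math.acos(cos_b))


def vector_angle(A, B, C):
    """Same angle via atan2(|cross|, dot) of B->A and B->C, as an independent check."""
    v1 = (A[0] - B[0], A[1] - B[1])
    v2 = (C[0] - B[0], C[1] - B[1])
    cross = v1[0] * v2[1] - v1[1] * v2[0]
    dot = v1[0] * v2[0] + v1[1] * v2[1]
    return math.degrees(math.atan2(abs(cross), dot))


# Hypothetical right arm 2-3-4: shoulder, elbow, wrist
shoulder, elbow, wrist = (120, 200), (180, 260), (260, 230)
print(law_of_cosines_angle(shoulder, elbow, wrist), vector_angle(shoulder, elbow, wrist))
```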

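The 25 features planned earlier (distances plus angles) are stored in lists, which is fairly easy to follow. For the demo, some of the feature values are printed; that is roughly the idea. All of this data needs to be saved and collected in preparation for building the model and doing recognition later.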
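One simple way to collect these values is one CSV row per image. A minimal sketch using the standard `csv` module, assuming a label column followed by the 17 distances and the 8 angles; the file name, the column names `d0` to `d16` and `a0` to `a7`, and `append_feature_row` are all hypothetical. The demo writes dummy values to a temporary file.

```python
import csv
import os
import tempfile


def append_feature_row(csv_path, label, distances, angles):
    """Append one sample (label + distances + angles), writing a header for a new file."""
    new_file = not os.path.exists(csv_path)
    with open(csv_path, "a", newline="", encoding="utf-8") as f:
        writer = csv.writer(f)
        if new_file:
            writer.writerow(["label"]
                            + ["d{}".format(i) for i in range(len(distances))]
                            + ["a{}".format(i) for i in range(len(angles))])
        writer.writerow([label] + list(distances) + list(angles))


# Demo with dummy values in place of DistanceList / AngleList
demo_path = os.path.join(tempfile.mkdtemp(), "sign_features.csv")
append_feature_row(demo_path, "sample_label", [1.0] * 17, [90.0] * 8)
with open(demo_path, newline="", encoding="utf-8") as f:
    rows = list(csv.reader(f))
print(rows[0][:3], len(rows[0]))
```

In the main script this would be called as `append_feature_row(path, label, DistanceList, AngleList)` after the two lists are computed.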

3. Demo

4. Full code

```python
#!/usr/bin/python3
# -*- coding: utf-8 -*-
from __future__ import division  # true division (already the default on Python 3)
import cv2
import os
import time
import math
import pandas as pd
import numpy as np
import matplotlib.pyplot as plt

plt.rcParams['font.sans-serif'] = ['SimHei']  # display Chinese labels correctly
plt.rcParams['axes.unicode_minus'] = False  # display minus signs correctly


class general_pose_model(object):
    def __init__(self, modelpath):
        # Models used:
        # hand: 22 points (21 hand keypoints; the 22nd is the background)
        # COCO: 18 points (plus one background channel)
        self.inWidth = 368
        self.inHeight = 368
        self.threshold = 0.1
        self.pose_net = self.general_coco_model(modelpath)
        self.hand_num_points = 22
        self.hand_point_pairs = [[0, 1], [1, 2], [2, 3], [3, 4],
                                 [0, 5], [5, 6], [6, 7], [7, 8],
                                 [0, 9], [9, 10], [10, 11], [11, 12],
                                 [0, 13], [13, 14], [14, 15], [15, 16],
                                 [0, 17], [17, 18], [18, 19], [19, 20]]
        self.hand_net = self.get_hand_model(modelpath)
        self.MIN_DESCRIPTOR = 32  # not used elsewhere in this script

    # ---------- Skeleton keypoints: extraction and visualization ----------

    def general_coco_model(self, modelpath):
        self.points_name = {
            "Nose": 0, "Neck": 1,
            "RShoulder": 2, "RElbow": 3, "RWrist": 4,
            "LShoulder": 5, "LElbow": 6, "LWrist": 7,
            "RHip": 8, "RKnee": 9, "RAnkle": 10,
            "LHip": 11, "LKnee": 12, "LAnkle": 13,
            "REye": 14, "LEye": 15,
            "REar": 16, "LEar": 17,
            "Background": 18}
        self.bone_num_points = 18
        self.bone_point_pairs = [[1, 0], [1, 2], [1, 5],
                                 [2, 3], [3, 4], [5, 6],
                                 [6, 7], [1, 8], [8, 9],
                                 [9, 10], [1, 11], [11, 12],
                                 [12, 13], [0, 14], [0, 15],
                                 [14, 16], [15, 17]]
        prototxt = os.path.join(modelpath, "pose/coco/pose_deploy_linevec.prototxt")
        caffemodel = os.path.join(modelpath, "pose/coco/pose_iter_440000.caffemodel")
        coco_model = cv2.dnn.readNetFromCaffe(prototxt, caffemodel)
        return coco_model

    def getBoneKeypoints(self, imgfile):
        """COCO body keypoint detection
        :param imgfile: image path
        :return: list of 18 keypoints, each (x, y) or None
        """
        img_cv2 = cv2.imread(imgfile)
        img_height, img_width, _ = img_cv2.shape
        inpBlob = cv2.dnn.blobFromImage(img_cv2, 1.0 / 255, (self.inWidth, self.inHeight),
                                        (0, 0, 0), swapRB=False, crop=False)
        self.pose_net.setInput(inpBlob)
        self.pose_net.setPreferableBackend(cv2.dnn.DNN_BACKEND_OPENCV)
        self.pose_net.setPreferableTarget(cv2.dnn.DNN_TARGET_OPENCL)
        output = self.pose_net.forward()
        H = output.shape[2]
        W = output.shape[3]
        print("Shape:")
        print(output.shape)
        # vis heatmaps (pass the argument, not the global img_file)
        self.vis_bone_heatmaps(imgfile, output)
        points = []
        for idx in range(self.bone_num_points):
            probMap = output[0, idx, :, :]  # confidence map
            # Global maximum of the confidence map
            minVal, prob, minLoc, point = cv2.minMaxLoc(probMap)
            # Scale the point to fit on the original image
            x = (img_width * point[0]) / W
            y = (img_height * point[1]) / H
            if prob > self.threshold:
                points.append((int(x), int(y)))
            else:
                points.append(None)
        return points

    def __distance(self, A, B):
        """Distance helper
        :param A, B: two points A(x1, y1), B(x2, y2)
        :return: the distance |AB| (0 if either point is missing)
        """
        if A is None or B is None:
            return 0
        return math.sqrt((A[0] - B[0]) ** 2 + (A[1] - B[1]) ** 2)

    def __myAngle(self, A, B, C):
        """Angle helper
        :param A, B, C: three points A(x1, y1), B(x2, y2), C(x3, y3)
        :return: angle B in degrees (0 if a point is missing)
        """
        if A is None or B is None or C is None:
            return 0
        a = self.__distance(B, C)  # side opposite A
        b = self.__distance(A, C)  # side opposite B
        c = self.__distance(A, B)  # side opposite C
        if 2 * a * c != 0:
            cos_b = (a ** 2 + c ** 2 - b ** 2) / (2 * a * c)  # law of cosines
            cos_b = max(-1.0, min(1.0, cos_b))  # guard against rounding error
            return math.degrees(math.acos(cos_b))  # radians -> degrees
        return 0

    def bonepointDistance(self, keyPoint):
        """Distance features
        :param keyPoint: 18 COCO keypoints
        :return: list of 17 distances
        """
        distance0 = self.__distance(keyPoint[4], keyPoint[8])  # right wrist - right hip
        distance1 = self.__distance(keyPoint[7], keyPoint[11])  # left wrist - left hip
        distance2 = self.__distance(keyPoint[2], keyPoint[4])  # right shoulder - right wrist
        distance3 = self.__distance(keyPoint[5], keyPoint[7])  # left shoulder - left wrist
        distance4 = self.__distance(keyPoint[0], keyPoint[4])  # nose (head) - right wrist
        distance5 = self.__distance(keyPoint[0], keyPoint[7])  # nose (head) - left wrist
        distance6 = self.__distance(keyPoint[4], keyPoint[7])  # right wrist - left wrist
        distance7 = self.__distance(keyPoint[4], keyPoint[16])  # right wrist - right ear
        distance8 = self.__distance(keyPoint[7], keyPoint[17])  # left wrist - left ear
        distance9 = self.__distance(keyPoint[4], keyPoint[14])  # right wrist - right eye
        distance10 = self.__distance(keyPoint[7], keyPoint[15])  # left wrist - left eye
        distance11 = self.__distance(keyPoint[4], keyPoint[1])  # right wrist - neck
        distance12 = self.__distance(keyPoint[7], keyPoint[1])  # left wrist - neck
        distance13 = self.__distance(keyPoint[4], keyPoint[5])  # right wrist - left shoulder
        distance14 = self.__distance(keyPoint[4], keyPoint[6])  # right wrist - left elbow
        distance15 = self.__distance(keyPoint[7], keyPoint[2])  # left wrist - right shoulder
        distance16 = self.__distance(keyPoint[7], keyPoint[3])  # left wrist - right elbow
        return [distance0, distance1, distance2, distance3, distance4, distance5, distance6, distance7, distance8,
                distance9, distance10, distance11, distance12, distance13, distance14, distance15, distance16]

    def bonepointAngle(self, keyPoint):
        """Angle features
        :param keyPoint: 18 COCO keypoints
        :return: list of 8 angles in degrees
        """
        angle0 = self.__myAngle(keyPoint[2], keyPoint[3], keyPoint[4])  # right arm (at right elbow)
        angle1 = self.__myAngle(keyPoint[5], keyPoint[6], keyPoint[7])  # left arm (at left elbow)
        angle2 = self.__myAngle(keyPoint[3], keyPoint[2], keyPoint[1])  # right shoulder
        angle3 = self.__myAngle(keyPoint[6], keyPoint[5], keyPoint[1])  # left shoulder
        angle4 = self.__myAngle(keyPoint[4], keyPoint[0], keyPoint[7])  # right wrist - nose - left wrist
        if keyPoint[8] is None or keyPoint[11] is None:
            angle5 = 0
        else:
            # Midpoint of the two hips
            temp = ((keyPoint[8][0] + keyPoint[11][0]) / 2, (keyPoint[8][1] + keyPoint[11][1]) / 2)
            angle5 = self.__myAngle(keyPoint[4], temp, keyPoint[7])  # right wrist - hip midpoint - left wrist
        angle6 = self.__myAngle(keyPoint[4], keyPoint[1], keyPoint[8])  # right wrist - neck - right hip
        angle7 = self.__myAngle(keyPoint[7], keyPoint[1], keyPoint[11])  # left wrist - neck - left hip
        return [angle0, angle1, angle2, angle3, angle4, angle5, angle6, angle7]

    def vis_bone_pose(self, imgfile, points):
        """Show the image annotated with skeleton keypoints
        :param imgfile: image path
        :param points: COCO keypoints
        """
        img_cv2 = cv2.imread(imgfile)
        img_cv2_copy = np.copy(img_cv2)
        for idx in range(len(points)):
            if points[idx]:
                cv2.circle(img_cv2_copy, points[idx], 5, (0, 255, 255), thickness=-1, lineType=cv2.FILLED)
                cv2.putText(img_cv2_copy, "{}".format(idx), points[idx], cv2.FONT_HERSHEY_SIMPLEX, 1, (0, 0, 255), 4,
                            lineType=cv2.LINE_AA)
        if points[4]:  # right wrist
            h = int(self.__distance(points[4], points[3]))  # right forearm length
            x_center = points[4][0]
            y_center = points[4][1]
            cv2.rectangle(img_cv2, (x_center - h, y_center - h), (x_center + h, y_center + h), (255, 0, 0), 2)  # box
            cv2.circle(img_cv2, (x_center, y_center), 3, (0, 0, 255), thickness=-1, lineType=cv2.FILLED)  # center
            cv2.putText(img_cv2, "%d,%d" % (x_center, y_center), (x_center, y_center), cv2.FONT_HERSHEY_SIMPLEX,
                        0.6, (0, 0, 255), 2, lineType=cv2.LINE_AA)
        if points[7]:  # left wrist
            h = int(self.__distance(points[7], points[6]))  # left forearm length
            x_center = points[7][0]
            y_center = points[7][1]
            cv2.rectangle(img_cv2, (x_center - h, y_center - h), (x_center + h, y_center + h), (255, 0, 0), 2)
            cv2.putText(img_cv2, "%d,%d" % (x_center, y_center), (x_center, y_center), cv2.FONT_HERSHEY_SIMPLEX,
                        0.6, (0, 0, 255), 2, lineType=cv2.LINE_AA)
            # Diagonal corners of the box
            cv2.circle(img_cv2, (x_center - h, y_center - h), 3, (225, 225, 255), thickness=-1, lineType=cv2.FILLED)
            cv2.putText(img_cv2, "{}".format(x_center - h), (x_center - h, y_center - h), cv2.FONT_HERSHEY_SIMPLEX,
                        0.6, (255, 0, 0), 2, lineType=cv2.LINE_AA)
            cv2.circle(img_cv2, (x_center + h, y_center + h), 3, (225, 225, 255), thickness=-1, lineType=cv2.FILLED)
            cv2.putText(img_cv2, "{}".format(x_center + h), (x_center + h, y_center + h), cv2.FONT_HERSHEY_SIMPLEX,
                        0.6, (255, 0, 0), 2, lineType=cv2.LINE_AA)
        # Skeleton lines
        for pair in self.bone_point_pairs:
            partA = pair[0]
            partB = pair[1]
            if points[partA] and points[partB]:
                cv2.line(img_cv2, points[partA], points[partB], (0, 255, 255), 3)
                cv2.circle(img_cv2, points[partA], 4, (0, 0, 255), thickness=-1, lineType=cv2.FILLED)
        plt.figure(figsize=[10, 10])
        plt.subplot(1, 2, 1)
        plt.imshow(cv2.cvtColor(img_cv2, cv2.COLOR_BGR2RGB))
        plt.axis("off")
        plt.subplot(1, 2, 2)
        plt.imshow(cv2.cvtColor(img_cv2_copy, cv2.COLOR_BGR2RGB))
        plt.axis("off")
        plt.show()

    def vis_bone_heatmaps(self, imgfile, net_outputs):
        """Show the skeleton keypoint heatmaps
        :param imgfile: image path
        :param net_outputs: network output
        """
        img_cv2 = cv2.imread(imgfile)
        plt.figure(figsize=[10, 10])
        for pdx in range(self.bone_num_points):
            probMap = net_outputs[0, pdx, :, :]  # heatmap of keypoint pdx
            probMap = cv2.resize(probMap, (img_cv2.shape[1], img_cv2.shape[0]))
            plt.subplot(5, 5, pdx + 1)
            plt.imshow(cv2.cvtColor(img_cv2, cv2.COLOR_BGR2RGB))  # background
            plt.imshow(probMap, alpha=0.6)
            plt.colorbar()
            plt.axis("off")
        plt.show()

    # ---------- Hands: locate both hands from the skeleton, then extract and show hand keypoints ----------

    def get_hand_model(self, modelpath):
        prototxt = os.path.join(modelpath, "hand/pose_deploy.prototxt")
        caffemodel = os.path.join(modelpath, "hand/pose_iter_102000.caffemodel")
        hand_model = cv2.dnn.readNetFromCaffe(prototxt, caffemodel)
        return hand_model

    def getOneHandKeypoints(self, handimg):
        """Hand keypoint detection (single hand)
        :param handimg: hand image (BGR array)
        :return: list of 22 keypoints, each (x, y) or None
        """
        img_height, img_width, _ = handimg.shape
        aspect_ratio = img_width / img_height
        inWidth = int(((aspect_ratio * self.inHeight) * 8) // 8)
        inpBlob = cv2.dnn.blobFromImage(handimg, 1.0 / 255, (inWidth, self.inHeight),
                                        (0, 0, 0), swapRB=False, crop=False)
        self.hand_net.setInput(inpBlob)
        output = self.hand_net.forward()
        # vis heatmaps
        self.vis_hand_heatmaps(handimg, output)
        points = []
        for idx in range(self.hand_num_points):
            probMap = output[0, idx, :, :]  # confidence map
            probMap = cv2.resize(probMap, (img_width, img_height))
            # Find global maxima of the probMap.
            minVal, prob, minLoc, point = cv2.minMaxLoc(probMap)
            if prob > self.threshold:
                points.append((int(point[0]), int(point[1])))
            else:
                points.append(None)
        return points

    def getHandROI(self, imgfile, bonepoints):
        """Find the regions of interest (crops) of both hands
        :param imgfile: image path
        :param bonepoints: COCO skeleton keypoints
        :return: right-hand crop, left-hand crop
        """
        img_cv2 = cv2.imread(imgfile)  # original image
        img_height, img_width, _ = img_cv2.shape
        rimg = img_cv2.copy()  # fallback copies, used if a hand is not detected
        limg = img_cv2.copy()
        # Crop a square centered on the right wrist, half-side = forearm length
        if bonepoints[4] and bonepoints[3]:  # right hand
            h = int(self.__distance(bonepoints[4], bonepoints[3]))  # forearm length
            x_center = bonepoints[4][0]
            y_center = bonepoints[4][1]
            x1 = x_center - h
            y1 = y_center - h
            x2 = x_center + h
            y2 = y_center + h
            print(x1, x2, x_center, y_center, y1, y2)
            # Clip the box to the image borders
            if x1 < 0:
                x1 = 0
            if x2 > img_width:
                x2 = img_width
            if y1 < 0:
                y1 = 0
            if y2 > img_height:
                y2 = img_height
            rimg = img_cv2[y1:y2, x1:x2]  # crop as [y0:y1, x0:x1]
        if bonepoints[7] and bonepoints[6]:  # left hand
            h = int(self.__distance(bonepoints[7], bonepoints[6]))  # forearm length
            x_center = bonepoints[7][0]
            y_center = bonepoints[7][1]
            x1 = x_center - h
            y1 = y_center - h
            x2 = x_center + h
            y2 = y_center + h
            print(x1, x2, x_center, y_center, y1, y2)
            if x1 < 0:
                x1 = 0
            if x2 > img_width:
                x2 = img_width
            if y1 < 0:
                y1 = 0
            if y2 > img_height:
                y2 = img_height
            limg = img_cv2[y1:y2, x1:x2]  # crop as [y0:y1, x0:x1]
        plt.figure(figsize=[10, 10])
        plt.subplot(1, 2, 1)
        plt.imshow(cv2.cvtColor(rimg, cv2.COLOR_BGR2RGB))
        plt.axis("off")
        plt.subplot(1, 2, 2)
        plt.imshow(cv2.cvtColor(limg, cv2.COLOR_BGR2RGB))
        plt.axis("off")
        plt.show()
        return rimg, limg

    def getHandsKeypoints(self, rimg, limg):
        """Detect keypoints on each of the two hand crops
        :param rimg, limg: right-hand and left-hand crops
        :return: right-hand keypoints, left-hand keypoints
        """
        # Detect keypoints on each hand crop
        rhandpoints = self.getOneHandKeypoints(rimg)
        lhandpoints = self.getOneHandKeypoints(limg)
        # Display
        self.vis_hand_pose(rimg, rhandpoints)
        self.vis_hand_pose(limg, lhandpoints)
        return rhandpoints, lhandpoints

    def vis_hand_heatmaps(self, handimg, net_outputs):
        """Show the hand keypoint heatmaps (single hand)
        :param handimg: hand image
        :param net_outputs: network output
        """
        plt.figure(figsize=[10, 10])
        for pdx in range(self.hand_num_points):
            probMap = net_outputs[0, pdx, :, :]
            probMap = cv2.resize(probMap, (handimg.shape[1], handimg.shape[0]))
            plt.subplot(5, 5, pdx + 1)
            plt.imshow(cv2.cvtColor(handimg, cv2.COLOR_BGR2RGB))
            plt.imshow(probMap, alpha=0.6)
            plt.colorbar()
            plt.axis("off")
        plt.show()

    def vis_hand_pose(self, handimg, points):
        """Show the image annotated with hand keypoints (single hand)
        :param handimg: hand image
        :param points: keypoints detected for this hand
        """
        img_cv2_copy = np.copy(handimg)
        for idx in range(len(points)):
            if points[idx]:
                cv2.circle(img_cv2_copy, points[idx], 2, (0, 255, 255), thickness=-1, lineType=cv2.FILLED)
                cv2.putText(img_cv2_copy, "{}".format(idx), points[idx], cv2.FONT_HERSHEY_SIMPLEX, 0.3,
                            (0, 0, 255), 1, lineType=cv2.LINE_AA)
        # Draw Skeleton
        for pair in self.hand_point_pairs:
            partA = pair[0]
            partB = pair[1]
            if points[partA] and points[partB]:
                cv2.line(handimg, points[partA], points[partB], (0, 255, 255), 2)
                cv2.circle(handimg, points[partA], 2, (0, 0, 255), thickness=-1, lineType=cv2.FILLED)
        plt.figure(figsize=[10, 10])
        plt.subplot(1, 2, 1)
        plt.imshow(cv2.cvtColor(handimg, cv2.COLOR_BGR2RGB))
        plt.axis("off")
        plt.subplot(1, 2, 2)
        plt.imshow(cv2.cvtColor(img_cv2_copy, cv2.COLOR_BGR2RGB))
        plt.axis("off")
        plt.show()


if __name__ == '__main__':
    print("[INFO]Pose estimation.")
    img_file = "images/letter/melon.jpg"
    start = time.time()
    modelpath = "models/"
    pose_model = general_pose_model(modelpath)
    print("[INFO]Model loads time: ", time.time() - start)
    start = time.time()
    bone_points = pose_model.getBoneKeypoints(img_file)
    print("[INFO]Model predicts time: ", time.time() - start)
    pose_model.vis_bone_pose(img_file, bone_points)
    print("Skeleton distance features: ")
    DistanceList = pose_model.bonepointDistance(bone_points)
    print(DistanceList)
    print("Skeleton angle features: ")
    AngleList = pose_model.bonepointAngle(bone_points)
    print(AngleList)
    # Hands
    rimg, limg = pose_model.getHandROI(img_file, bone_points)  # right/left hand crops
    rhandpoints, lhandpoints = pose_model.getHandsKeypoints(rimg, limg)  # hand keypoints
    print("Right-hand and left-hand keypoints: ")
    print(rhandpoints)
    print(lhandpoints)
```
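`getBoneKeypoints` turns each confidence map into a single point with `cv2.minMaxLoc` and rescales it from heatmap coordinates to image coordinates. The same step in plain NumPy can run without the models. This is a sketch: `heatmap_to_keypoint` is a hypothetical name, the 46×46 grid is an assumed output size for a 368×368 input, and the peak is synthetic.

```python
import numpy as np


def heatmap_to_keypoint(prob_map, img_w, img_h, threshold=0.1):
    """Global maximum of one confidence map, rescaled to image pixels.

    Takes the highest-confidence cell, scales (column, row) by the
    image/heatmap size ratio, and returns None when the peak does not
    exceed the threshold (the same rule as `prob > self.threshold`).
    """
    H, W = prob_map.shape
    row, col = np.unravel_index(np.argmax(prob_map), prob_map.shape)
    if prob_map[row, col] <= threshold:
        return None
    return int(img_w * col / W), int(img_h * row / H)


heat = np.zeros((46, 46), dtype=np.float32)  # assumed output grid size
heat[10, 23] = 0.8                           # synthetic peak at row 10, column 23
print(heatmap_to_keypoint(heat, 640, 480))
```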
