
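#Teaching a Computer to Draw Cartoon Characters# How Generative Adversarial Networks (GANs) Work, and Building a TensorFlow Network That Generates Cartoon Faces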



The picture below was drawn by a computer after I taught it to draw; the full implementation follows.

▲How GANs work
●GANs draw their main inspiration from the zero-sum game in game theory. Applied to deep neural networks, a generator G and a discriminator D compete against each other continually, so that G learns the real data distribution. For image generation, once training is complete, G can produce realistic images from a vector of random numbers.
●Choice of activation functions
D network: ReLU on every layer except the last, whose output is not activated.
G network: sigmoid on the last layer, ReLU on the layers before it.
Weights are initialized with standard deviation stddev=0.01.

▲A variant: DCGAN
●DCGAN is one of the better improvements that followed the original GAN. Its changes are mainly to the network architecture, which is still widely used today. DCGAN greatly improved the stability of GAN training and the quality of the generated results.

▲Building a DCGAN with TensorFlow to generate cartoon faces
This is the cartoon-face dataset.
●Next, write the DCGAN network. The complete program follows.

    import tensorflow as tf  # TensorFlow 1.x API (tf.placeholder, tf.Session, tf.layers)
    import matplotlib.pyplot as plt
    import numpy as np
    from Samping import MyDataset


    class D_net:
        """Discriminator: maps a 96x96x3 image to a single logit."""

        def __init__(self):
            self.w1 = tf.Variable(tf.truncated_normal(shape=[5, 5, 3, 64], stddev=0.02, dtype=tf.float32))
            self.b1 = tf.Variable(tf.zeros(shape=[64], dtype=tf.float32))
            self.w2 = tf.Variable(tf.truncated_normal(shape=[5, 5, 64, 128], stddev=0.02, dtype=tf.float32))
            self.b2 = tf.Variable(tf.zeros(shape=[128], dtype=tf.float32))
            self.w3 = tf.Variable(tf.truncated_normal(shape=[5, 5, 128, 256], stddev=0.02, dtype=tf.float32))
            self.b3 = tf.Variable(tf.zeros(shape=[256], dtype=tf.float32))
            self.w4 = tf.Variable(tf.truncated_normal(shape=[5, 5, 256, 512], stddev=0.02, dtype=tf.float32))
            self.b4 = tf.Variable(tf.zeros(shape=[512], dtype=tf.float32))
            self.w5 = tf.Variable(tf.truncated_normal(shape=[6, 6, 512, 1], stddev=0.02, dtype=tf.float32))
            self.b5 = tf.Variable(tf.zeros(shape=[1], dtype=tf.float32))

        def forward(self, x):
            x = tf.reshape(x, [-1, 96, 96, 3])
            # No batch normalization on the discriminator's input layer.
            y1 = tf.nn.leaky_relu(tf.nn.conv2d(x, self.w1, [1, 2, 2, 1], padding="SAME") + self.b1)  # 48*48*64
            # tf.layers.batch_normalization is called with its default training=False.
            y2 = tf.nn.leaky_relu(tf.layers.batch_normalization(
                tf.nn.conv2d(y1, self.w2, [1, 2, 2, 1], padding="SAME") + self.b2))  # 24*24*128
            y3 = tf.nn.leaky_relu(tf.layers.batch_normalization(
                tf.nn.conv2d(y2, self.w3, [1, 2, 2, 1], padding="SAME") + self.b3))  # 12*12*256
            y4 = tf.nn.leaky_relu(tf.layers.batch_normalization(
                tf.nn.conv2d(y3, self.w4, [1, 2, 2, 1], padding="SAME") + self.b4))  # 6*6*512
            # A 6x6 VALID convolution collapses the 6x6 map to one value per image.
            y5 = tf.nn.leaky_relu(tf.layers.batch_normalization(
                tf.nn.conv2d(y4, self.w5, [1, 1, 1, 1], padding="VALID") + self.b5))  # 1*1*1
            y5 = tf.reshape(y5, [-1, 1])  # ★★★★★
            return y5

        def params(self):
            return [self.w1, self.b1, self.w2, self.b2, self.w3, self.b3,
                    self.w4, self.b4, self.w5, self.b5]


    class G_net:
        """Generator: maps a 128-dimensional noise vector to a 96x96x3 image."""

        def __init__(self):
            self.w1 = tf.Variable(tf.truncated_normal(shape=[128, 6 * 6 * 512], stddev=0.02, dtype=tf.float32))
            self.b1 = tf.Variable(tf.zeros(shape=[6 * 6 * 512], dtype=tf.float32))  # [None,6*6*512]
            # conv2d_transpose filters have shape [height, width, out_channels, in_channels].
            self.w2 = tf.Variable(tf.truncated_normal(shape=[5, 5, 256, 512], stddev=0.02, dtype=tf.float32))
            self.b2 = tf.Variable(tf.zeros(shape=[256], dtype=tf.float32))  # [100,12,12,256]
            self.w3 = tf.Variable(tf.truncated_normal(shape=[5, 5, 128, 256], stddev=0.02, dtype=tf.float32))
            self.b3 = tf.Variable(tf.zeros(shape=[128], dtype=tf.float32))  # [100,24,24,128]
            self.w4 = tf.Variable(tf.truncated_normal(shape=[5, 5, 64, 128], stddev=0.02, dtype=tf.float32))
            self.b4 = tf.Variable(tf.zeros(shape=[64], dtype=tf.float32))  # 48*48*64
            self.w5 = tf.Variable(tf.truncated_normal(shape=[5, 5, 3, 64], stddev=0.02, dtype=tf.float32))
            self.b5 = tf.Variable(tf.zeros(shape=[3], dtype=tf.float32))  # 96*96*3

        def forward(self, x):  # x: [None,128]
            y1 = tf.nn.relu(tf.layers.batch_normalization(tf.matmul(x, self.w1) + self.b1))  # [None,6*6*512]
            y1 = tf.reshape(y1, [-1, 6, 6, 512])
            # The output shapes hard-code a batch size of 100.
            y2 = tf.nn.relu(tf.layers.batch_normalization(tf.nn.conv2d_transpose(
                y1, self.w2, [100, 12, 12, 256], strides=[1, 2, 2, 1], padding="SAME") + self.b2))  # [100,12,12,256]
            y3 = tf.nn.relu(tf.layers.batch_normalization(tf.nn.conv2d_transpose(
                y2, self.w3, [100, 24, 24, 128], strides=[1, 2, 2, 1], padding="SAME") + self.b3))  # [100,24,24,128]
            y4 = tf.nn.tanh(tf.nn.conv2d_transpose(
                y3, self.w4, [100, 48, 48, 64], strides=[1, 2, 2, 1], padding="SAME") + self.b4)  # 48*48*64
            # tanh keeps the generated pixels in [-1, 1].
            y5 = tf.nn.tanh(tf.nn.conv2d_transpose(
                y4, self.w5, [100, 96, 96, 3], strides=[1, 2, 2, 1], padding="SAME") + self.b5)  # [100,96,96,3]
            return y5

        def params(self):
            return [self.w1, self.b1, self.w2, self.b2, self.w3, self.b3,
                    self.w4, self.b4, self.w5, self.b5]


    class Net:
        def __init__(self):
            self.fake_x = tf.placeholder(dtype=tf.float32, shape=[None, 128])
            self.real_x = tf.placeholder(dtype=tf.float32, shape=[None, 96, 96, 3])  # ★★★
            self.fake_label = tf.placeholder(dtype=tf.float32, shape=[None, 1])
            self.real_label = tf.placeholder(dtype=tf.float32, shape=[None, 1])
            self.d_net = D_net()
            self.g_net = G_net()

        def forward(self):
            self.g_fake_out = self.g_net.forward(self.fake_x)
            self.d_fake_out = self.d_net.forward(self.g_fake_out)
            self.d_real_out = self.d_net.forward(self.real_x)

        def loss(self):
            self.d_fake_loss = tf.reduce_mean(tf.nn.sigmoid_cross_entropy_with_logits(
                labels=self.fake_label, logits=self.d_fake_out))
            self.d_real_loss = tf.reduce_mean(tf.nn.sigmoid_cross_entropy_with_logits(
                labels=self.real_label, logits=self.d_real_out))
            self.d_loss = self.d_fake_loss + self.d_real_loss
            # Same expression as d_fake_loss; the generator step feeds fake_label = 1.
            self.g_loss = tf.reduce_mean(tf.nn.sigmoid_cross_entropy_with_logits(
                labels=self.fake_label, logits=self.d_fake_out))

        def backward(self):
            # Each optimizer only updates its own network's variables.
            self.d_optimizer = tf.train.AdamOptimizer(0.0002, beta1=0.5).minimize(
                self.d_loss, var_list=self.d_net.params())
            self.g_optimizer = tf.train.AdamOptimizer(0.0002, beta1=0.5).minimize(
                self.g_loss, var_list=self.g_net.params())


    if __name__ == '__main__':
        net = Net()
        net.forward()
        net.loss()
        net.backward()
        mydataset = MyDataset()
        init = tf.global_variables_initializer()
        with tf.Session() as sess:
            sess.run(init)
            for i in range(50000):
                # Discriminator step: real images labelled 1, generated images labelled 0.
                real_x = mydataset.get_batch(100)
                real_label = np.ones([100, 1])
                fake_x = np.random.uniform(-1, 1, (100, 128))
                fake_label = np.zeros([100, 1])
                D_loss, _ = sess.run([net.d_loss, net.d_optimizer],
                                     feed_dict={net.fake_x: fake_x, net.fake_label: fake_label,
                                                net.real_x: real_x, net.real_label: real_label})

                # Generator step, run twice per discriminator step: generated images labelled 1.
                fake_xs = np.random.uniform(-1, 1, (100, 128))
                fake_labels = np.ones([100, 1])
                sess.run([net.g_loss, net.g_optimizer],  # ★★★★
                         feed_dict={net.fake_x: fake_xs, net.fake_label: fake_labels})
                G_loss, _ = sess.run([net.g_loss, net.g_optimizer],
                                     feed_dict={net.fake_x: fake_xs, net.fake_label: fake_labels})

                if i % 10 == 0:
                    fake_xss = np.random.uniform(-1, 1, (100, 128))
                    array = sess.run(net.g_fake_out, feed_dict={net.fake_x: fake_xss})  # [100,96,96,3]
                    img_array = np.reshape(array[0], [96, 96, 3])  # ★★★
                    # Map the tanh range [-1, 1] to [0, 1], which imshow expects for floats.
                    plt.imshow((img_array + 1) / 2)
                    plt.pause(0.1)
                    print("d_loss", D_loss)
                    print("g_loss", G_loss)

▲Result: the picture below was drawn by the computer. What do you think?


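▲An intuitive picture of GANs
●Zhao is an idler with no proper job who nonetheless dreams every day of getting rich. One day he has a sudden idea: he will make his "dream" come true by counterfeiting money. The first time, he makes a fake banknote and takes it to the supermarket, but because it is his first attempt the workmanship is crude and the cashier spots it at once. So he improves his technique and makes another fake note. This time the cashier does not notice right away, but the banknote detector catches it. Unwilling to give up, Zhao spends heavily on "professional equipment", and sure enough, this time the note fools not only the cashier but the detector as well.
●In this example Zhao keeps competing on technique with the cashier and the banknote detector, growing bolder with every setback until his fakes pass for the real thing. A GAN is exactly this kind of process. It has two core elements: 1. Zhao makes counterfeit notes; 2. the counterfeit notes are continually compared with real ones and put to the test.
●In a GAN, (1) Zhao making counterfeit notes corresponds to the generator, and (2) the continual comparison of fake notes with real ones corresponds to the discriminator. The generator and the discriminator play against each other.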

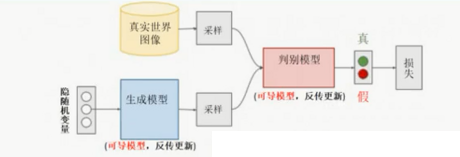

●A GAN contains a generative model G and a discriminative model D. The discriminator's job is to classify the real images (top of the figure) as 1 and the generated images (bottom of the figure) as 0. The generator, on the other hand, wants its generated distribution to be classified as 1, because only then can it learn. This sets up a game between the generative model and the discriminative model.

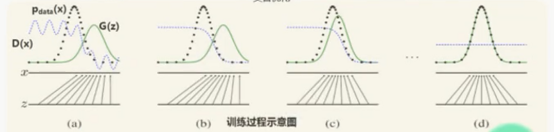

Schematic of the training process

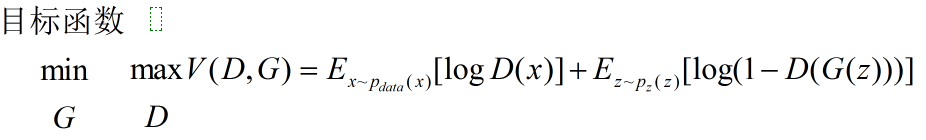

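Generative model G: maps a random variable z into data space and minimizes log(1-D(G(z))).
Discriminative model D: maximizes the probability of assigning the correct label to both the training data x and the generated data G(z).
The learning framework that a GAN sets up is really an imitation game between the generative model and the discriminative model. The generator's goal is to imitate and learn the real data distribution as closely as it can; the discriminator's goal is to decide whether an input it receives came from the real data distribution or from the generator.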
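The two objectives above are usually combined into a single minimax value function. The form below is the standard one from the original GAN formulation; the symbols $p_{\text{data}}$ (the real data distribution) and $p_z$ (the noise distribution) are notation introduced here for illustration and do not appear in the text above.

```latex
\min_G \max_D V(D, G)
  = \mathbb{E}_{x \sim p_{\text{data}}(x)}\big[\log D(x)\big]
  + \mathbb{E}_{z \sim p_z(z)}\big[\log\big(1 - D(G(z))\big)\big]
```

D maximizes both terms, while G influences only the second term and minimizes it, which is the log(1-D(G(z))) objective stated for the generator.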

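●How the loss functions are constructed
For the generator, generated data is given the label 1, the same label as real data, because only then can it learn. For the discriminator, data produced by the generator has label 0 and real data has label 1.
The generator wants generated data to score 1, or close to 1;
the discriminator wants generated data to score 0, or close to 0.
This is what creates the adversarial contest. When the generated data eventually becomes very good, both may settle at about 0.5, meaning the discriminator can no longer tell real from generated.
In essence the loss measures the gap between label and output (loss = label - out). Once training is finished the discriminator is no longer needed, and the generator is used on its own.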
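The labelling scheme above can be sketched in a few lines of NumPy. This is a minimal illustration, not the article's TensorFlow code: `sigmoid_cross_entropy` and `gan_losses` are hypothetical helper names, and the cross-entropy uses the same numerically stable formula that `tf.nn.sigmoid_cross_entropy_with_logits` documents. It assumes the discriminator outputs raw logits.

```python
import numpy as np


def sigmoid_cross_entropy(labels, logits):
    """Numerically stable binary cross-entropy computed from raw logits."""
    labels = np.asarray(labels, dtype=np.float64)
    logits = np.asarray(logits, dtype=np.float64)
    return np.maximum(logits, 0) - logits * labels + np.log1p(np.exp(-np.abs(logits)))


def gan_losses(d_real_logits, d_fake_logits):
    """Return (d_loss, g_loss) for one batch of discriminator logits."""
    d_real_logits = np.asarray(d_real_logits, dtype=np.float64)
    d_fake_logits = np.asarray(d_fake_logits, dtype=np.float64)
    # Discriminator: real data is labelled 1, generated data is labelled 0.
    d_loss = (sigmoid_cross_entropy(np.ones_like(d_real_logits), d_real_logits).mean()
              + sigmoid_cross_entropy(np.zeros_like(d_fake_logits), d_fake_logits).mean())
    # Generator: the same generated data, but labelled 1.
    g_loss = sigmoid_cross_entropy(np.ones_like(d_fake_logits), d_fake_logits).mean()
    return d_loss, g_loss


# A discriminator that cannot tell the two apart outputs logit 0, i.e. probability 0.5.
undecided = np.zeros((4, 1))
d_loss, g_loss = gan_losses(undecided, undecided)
print(d_loss, g_loss)

# A confident discriminator: real -> large positive logit, fake -> large negative logit.
d_loss_conf, g_loss_conf = gan_losses(np.full((4, 1), 10.0), np.full((4, 1), -10.0))
print(d_loss_conf, g_loss_conf)
```

In the undecided case every cross-entropy term equals log 2, so the discriminator loss is 2·log 2 and the generator loss is log 2. In the confident case the discriminator loss is near zero while the generator loss is large, which is the pressure that pushes the generator to improve.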

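●DCGAN's generator architecture is shown in the figure. Compared with the original GAN, DCGAN replaces almost all fully connected layers with convolutional layers, and the discriminator is almost a mirror image of the generator. As the figure shows, the G network has no pooling layers; it upsamples with transposed convolutions (conv2d_transpose) using a stride of 2 or more.
●Key points of the DCGAN architecture
1. In the D network, use strided convolutions (stride > 1) instead of pooling layers; in the G network, use conv2d_transpose instead of upsampling layers.
2. Applying batch normalization directly to every layer of G and D causes sample oscillation and model instability; leaving it off the generator's output layer and the discriminator's input layer prevents this.
3. The G network uses tanh on the output layer and ReLU on all other layers.
4. The D network uses LeakyReLU on all layers.
5. Do not use a fully connected layer as the output.
●DCGAN training details
1. In preprocessing, scale the images to the tanh range [-1,1].
2. Initialize all weights from a normal distribution with mean 0 and standard deviation 0.02.
3. The LeakyReLU slope is 0.2 (the default).
4. Use the Adam optimizer with pre-tuned hyperparameters.
5. learning rate = 0.0002 (a parameter of the Adam optimizer).
6. AdamOptimizer(0.0002, beta1=0.5).
7. Lower the momentum parameter beta1 from 0.9 to 0.5 to prevent oscillation and instability.
8. Use 5×5 convolution kernels to capture as much of the data as possible.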
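To see why stride-2 layers can stand in for pooling and upsampling, it helps to trace the spatial sizes layer by layer. The sketch below assumes TensorFlow's padding rules and the layer sizes used by the full program in this article (96×96 input, four stride-2 5×5 layers, and a final 6×6 VALID convolution in the discriminator); `conv_out` and `deconv_out` are hypothetical helper names introduced only for this illustration.

```python
import math


def conv_out(size, stride, kernel=5, padding="SAME"):
    """Spatial output size of a strided convolution under TensorFlow's padding rules."""
    if padding == "SAME":
        return math.ceil(size / stride)
    return math.ceil((size - kernel + 1) / stride)  # "VALID"


def deconv_out(size, stride):
    """Usual spatial output size of a SAME-padded transposed convolution."""
    return size * stride


# Discriminator: four stride-2 convolutions, then one 6x6 VALID convolution.
d_sizes = [96]
for _ in range(4):
    d_sizes.append(conv_out(d_sizes[-1], stride=2))
d_sizes.append(conv_out(d_sizes[-1], stride=1, kernel=6, padding="VALID"))

# Generator: start from a 6x6 feature map and double it four times.
g_sizes = [6]
for _ in range(4):
    g_sizes.append(deconv_out(g_sizes[-1], stride=2))

print(d_sizes)  # [96, 48, 24, 12, 6, 1]
print(g_sizes)  # [6, 12, 24, 48, 96]
```

Each stride-2 convolution halves the feature map and each stride-2 transposed convolution doubles it, so the two networks walk the same sizes in opposite directions.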

●First, write an image-sampling program that fetches 100 images at a time.
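The sampler itself is not reproduced in the text, so the following is only a hypothetical stand-in for it, not the author's implementation. It assumes the images have already been loaded into a uint8 array of shape [N, 96, 96, 3]; the constructor arguments and the random stand-in data are illustrative assumptions, while the `get_batch(100)` call and the scaling to [-1,1] follow the article.

```python
import numpy as np


class MyDataset:
    """Hypothetical in-memory sampler; the article's own version is not shown."""

    def __init__(self, images, seed=None):
        # images: uint8 array of shape [N, 96, 96, 3] with values in 0..255.
        images = np.asarray(images)
        # Scale pixels to [-1, 1] so they match the range of a tanh output.
        self.images = images.astype(np.float32) / 127.5 - 1.0
        self.rng = np.random.default_rng(seed)

    def get_batch(self, size):
        # Draw `size` distinct images at random when enough are available.
        replace = size > len(self.images)
        idx = self.rng.choice(len(self.images), size=size, replace=replace)
        return self.images[idx]


# Usage with random stand-in data instead of real image files.
stand_in_faces = np.random.randint(0, 256, size=(120, 96, 96, 3), dtype=np.uint8)
dataset = MyDataset(stand_in_faces, seed=0)
batch = dataset.get_batch(100)
print(batch.shape, batch.dtype, batch.min(), batch.max())
```

A real version would read the cartoon-face files from disk and resize them to 96×96 before this scaling step.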

Training this network takes a long time. On an average CPU it takes about 4 hours before the outlines of cartoon faces become visible; training on a GPU is faster.
