|

PyTorch for Beginners Series: torch.nn API, Transformer Layers (9)

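| Method | Description |
| --- | --- |
| nn.Transformer | A Transformer model. |
| nn.TransformerEncoder | TransformerEncoder is a stack of N encoder layers. |
| nn.TransformerDecoder | TransformerDecoder is a stack of N decoder layers. |
| nn.TransformerEncoderLayer | TransformerEncoderLayer is made up of a self-attention network and a feedforward network. |
| nn.TransformerDecoderLayer | TransformerDecoderLayer is made up of a self-attention network, a multi-head attention network, and a feedforward network. |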

Reference walkthrough: https://blog.csdn.net/qq_43645301/article/details/109279616

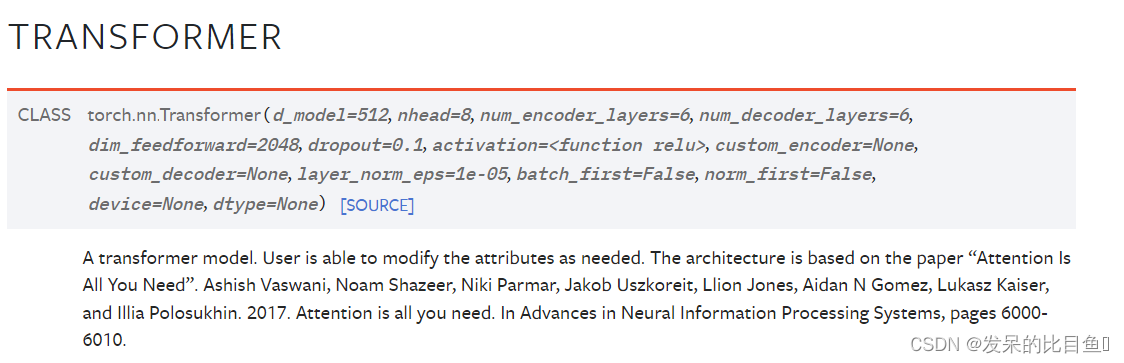

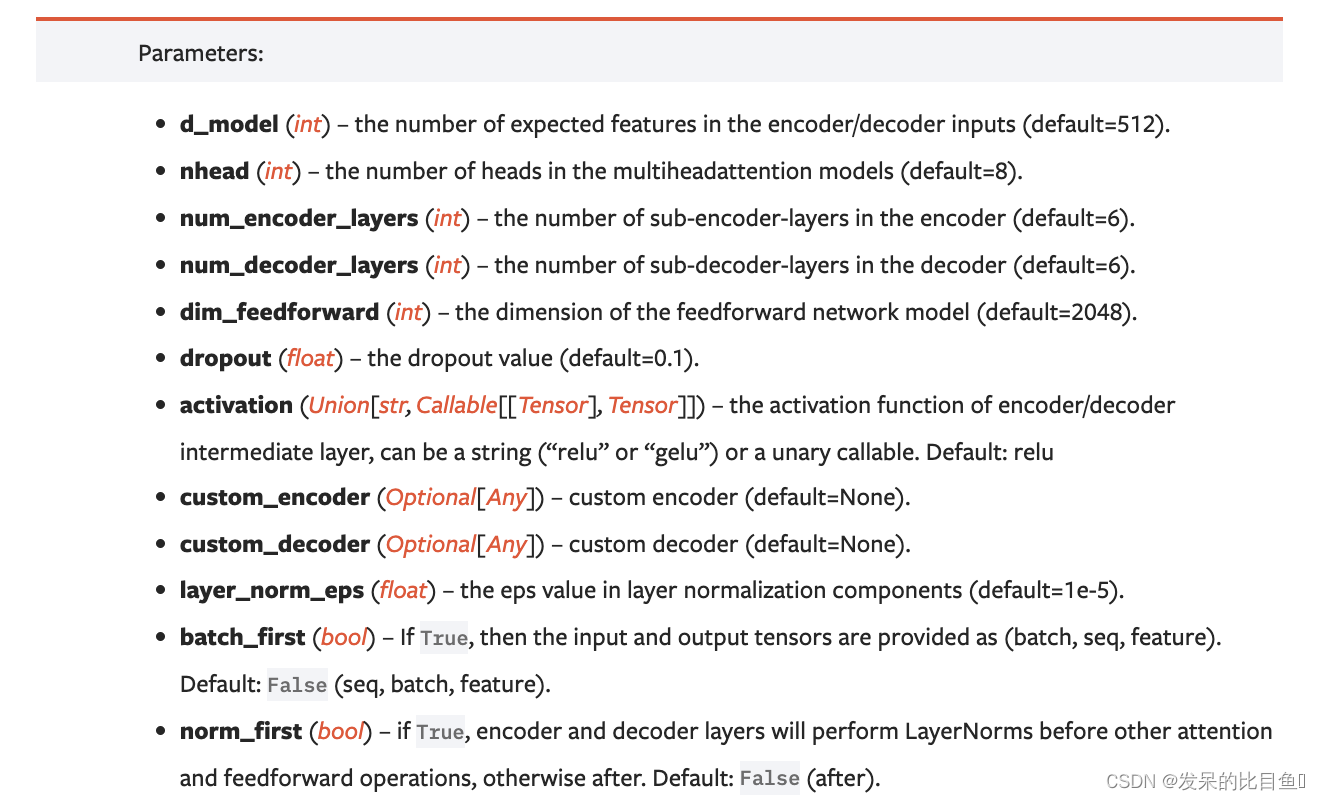

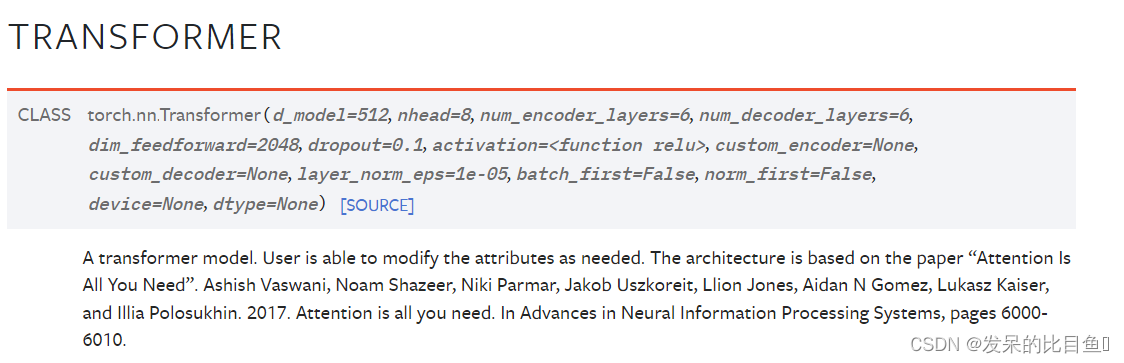

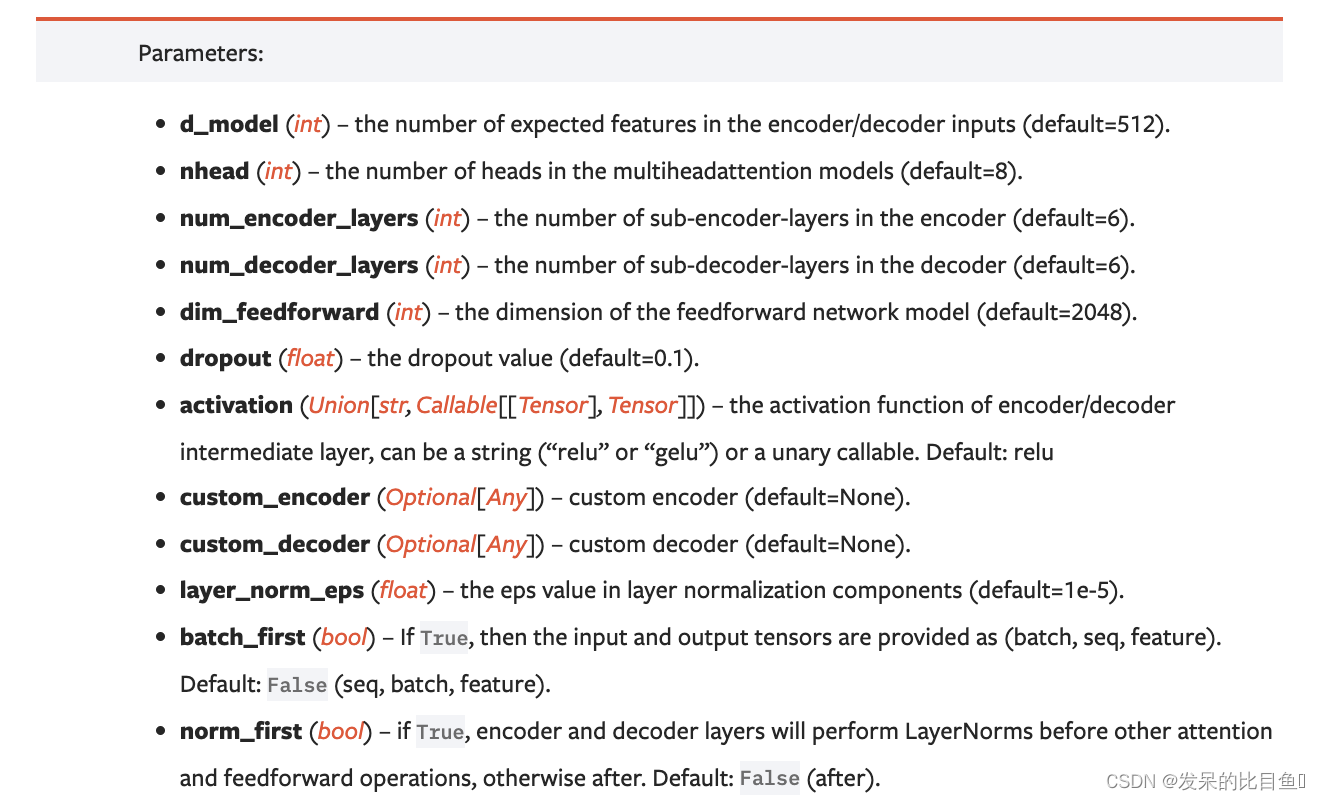

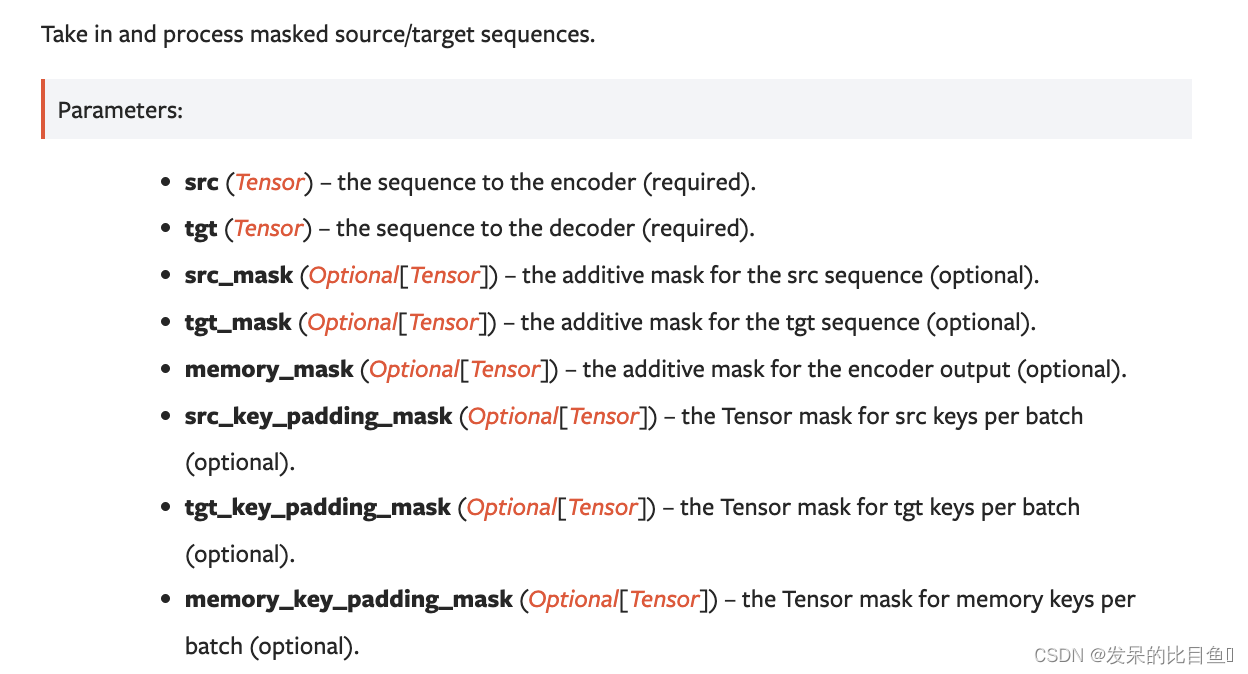

nn.Transformer

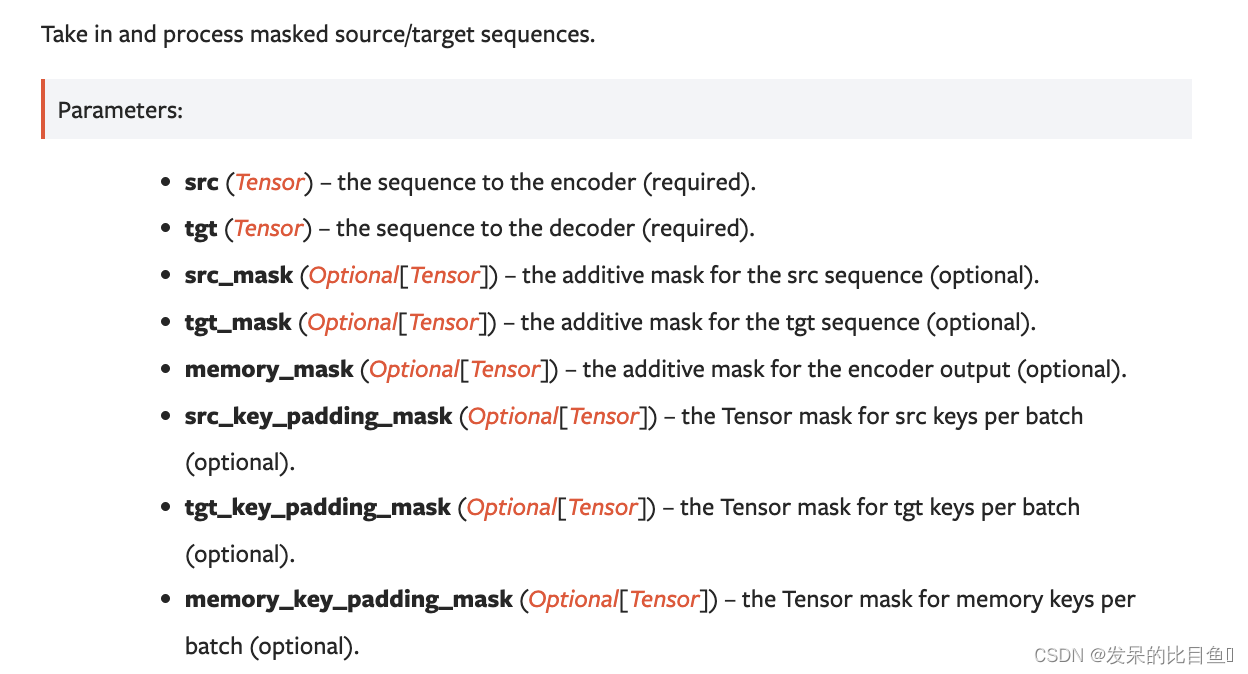

>>> import torch

>>> from torch import nn

>>> transformer_model = nn.Transformer(nhead=16, num_encoder_layers=12)

>>> src = torch.rand((10, 32, 512))

>>> tgt = torch.rand((20, 32, 512))

>>> out = transformer_model(src, tgt)

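To apply attention masks, pass src_mask and tgt_mask to the forward call (both must be defined beforehand):

>>> output = transformer_model(src, tgt, src_mask=src_mask, tgt_mask=tgt_mask)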
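The masked call above uses `src_mask` and `tgt_mask` without showing where they come from. The following is a minimal sketch of one way to build them, assuming a causal mask for the target and an all-zero (non-blocking) mask for the source. The small layer sizes are assumptions chosen so the example runs quickly; they are not from the original post.

```python
import torch
from torch import nn

# Small, assumed sizes; tensors use the (seq, batch, feature) layout.
d_model, nhead = 32, 4
transformer_model = nn.Transformer(
    d_model=d_model,
    nhead=nhead,
    num_encoder_layers=2,
    num_decoder_layers=2,
    dim_feedforward=64,
)

src = torch.rand(10, 3, d_model)  # (source length, batch, d_model)
tgt = torch.rand(20, 3, d_model)  # (target length, batch, d_model)

# Causal mask: position i may attend only to positions <= i.
# Entries above the diagonal are -inf, all others are 0.
tgt_mask = transformer_model.generate_square_subsequent_mask(tgt.size(0))

# A float mask is added to the attention scores, so all zeros blocks nothing.
src_mask = torch.zeros(src.size(0), src.size(0))

output = transformer_model(src, tgt, src_mask=src_mask, tgt_mask=tgt_mask)
print(output.shape)  # the output has the same shape as tgt
```

In a real translation-style model the source mask is often left as `None`, and padding is handled separately through the key padding mask arguments.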

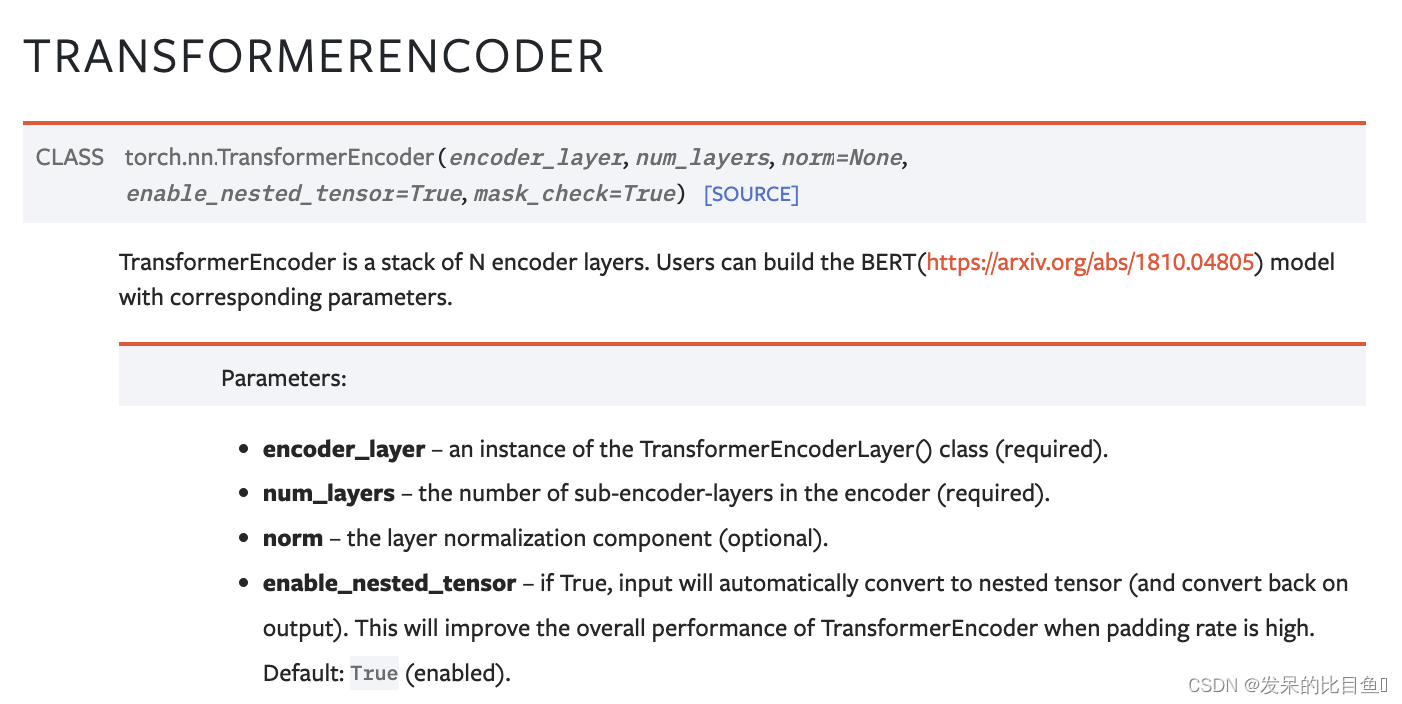

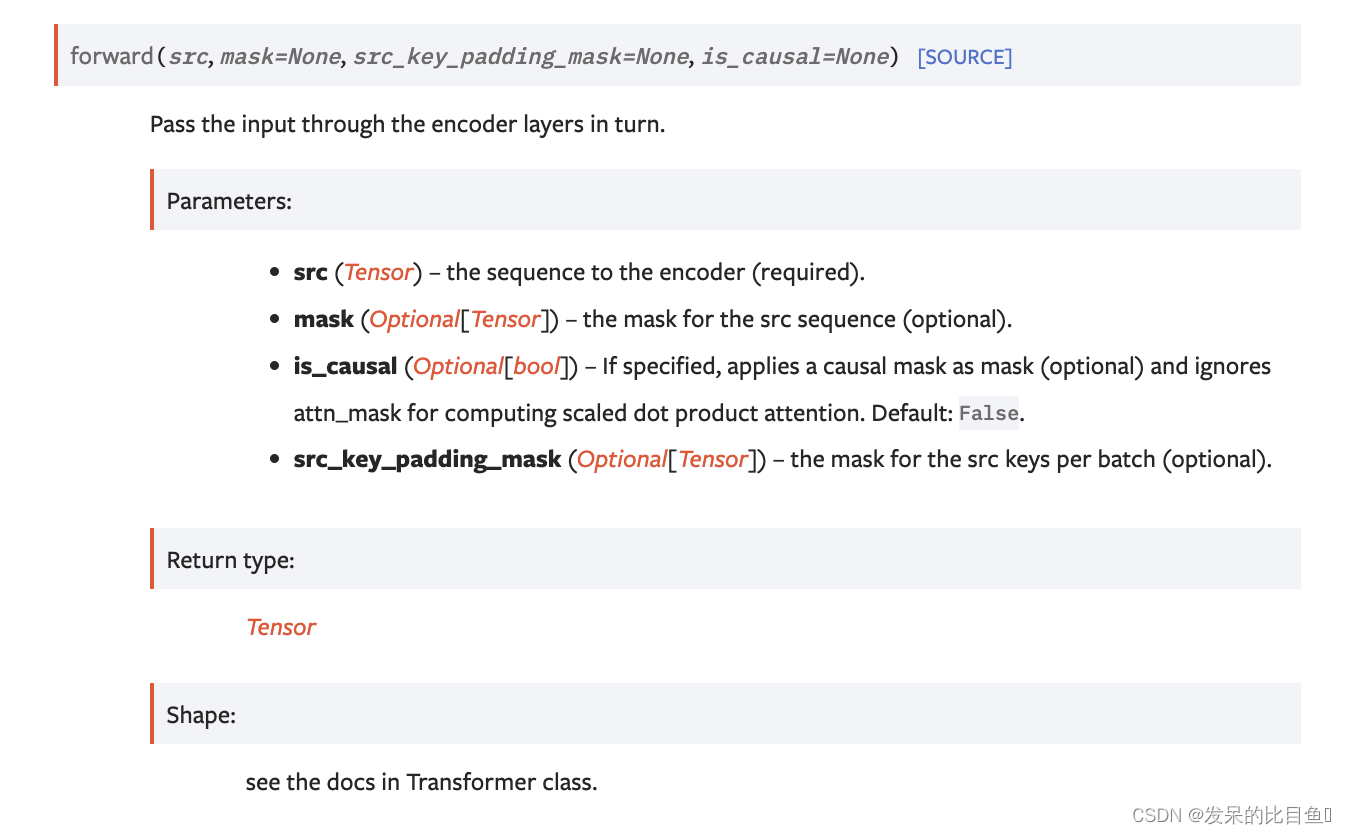

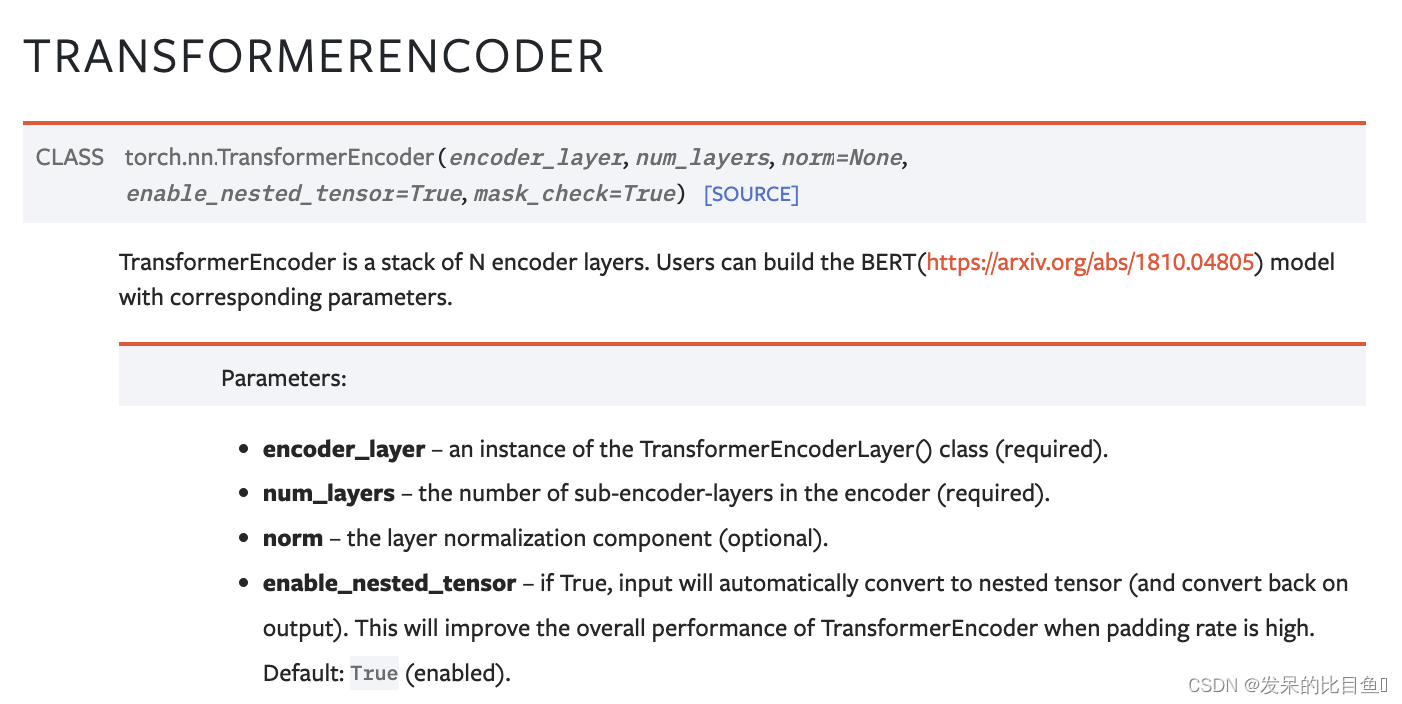

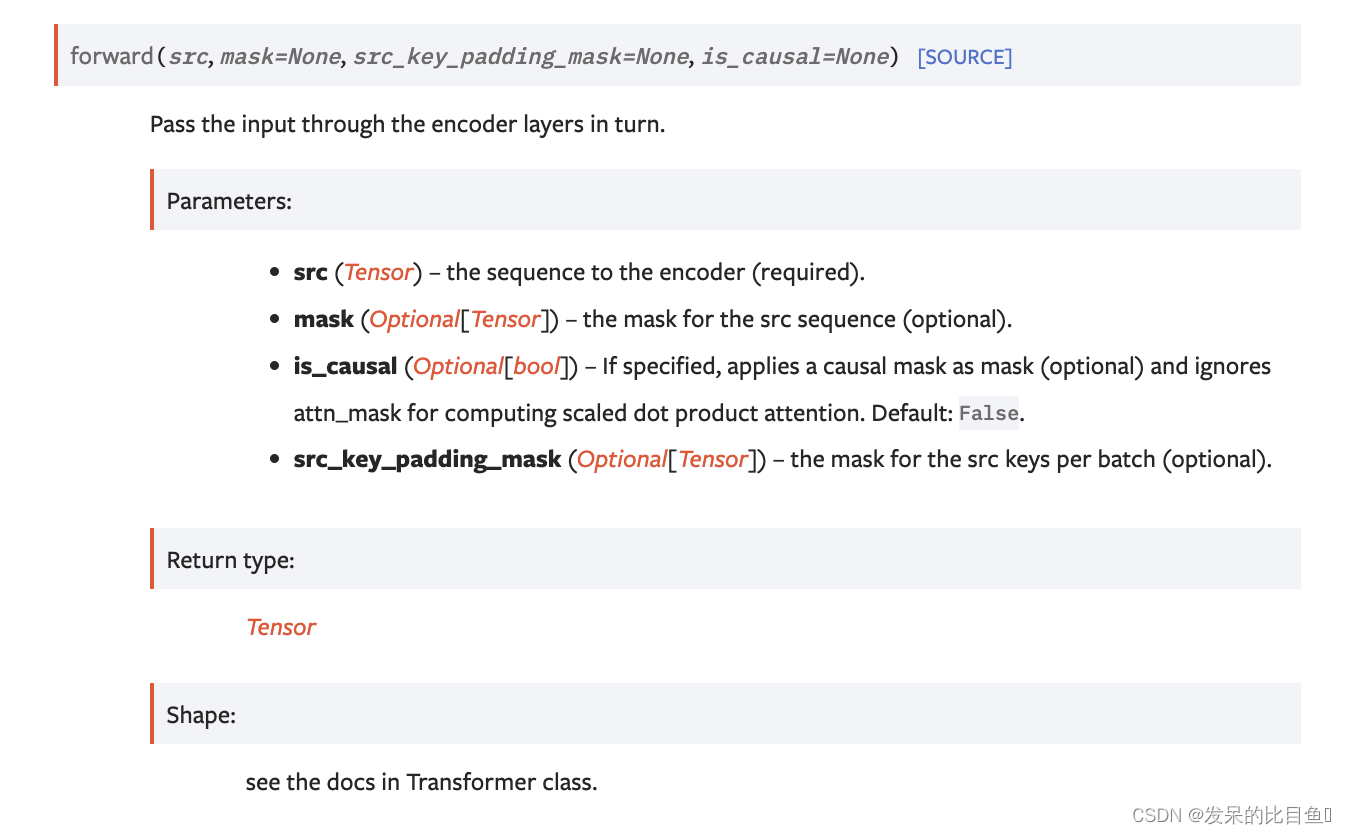

nn.TransformerEncoder

TransformerEncoder is a stack of N encoder layers. Users can build a BERT (https://arxiv.org/abs/1810.04805) model with the corresponding parameters.

>>> encoder_layer = nn.TransformerEncoderLayer(d_model=512, nhead=8)

>>> transformer_encoder = nn.TransformerEncoder(encoder_layer, num_layers=6)

>>> src = torch.rand(10, 32, 512)

>>> out = transformer_encoder(src)
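As a rough illustration of "corresponding parameters", the sketch below wraps the encoder stack in a hypothetical helper, `build_bert_like_encoder`, whose defaults follow the BERT-base sizes (12 layers, hidden size 768, 12 heads, feedforward size 3072, GELU). It covers only the encoder stack: embeddings, positional information, and pre-training heads are out of scope. The demo call uses a much smaller configuration so it runs quickly, and the padding mask is an assumed example.

```python
import torch
from torch import nn


def build_bert_like_encoder(d_model=768, nhead=12, num_layers=12, dim_feedforward=3072):
    """Hypothetical helper: an encoder stack with BERT-base-sized defaults."""
    layer = nn.TransformerEncoderLayer(
        d_model=d_model,
        nhead=nhead,
        dim_feedforward=dim_feedforward,
        activation="gelu",  # BERT uses GELU rather than the default ReLU
    )
    return nn.TransformerEncoder(layer, num_layers=num_layers)


# Tiny configuration (an assumption) so the sketch runs fast on CPU.
encoder = build_bert_like_encoder(d_model=32, nhead=4, num_layers=2, dim_feedforward=64)

src = torch.rand(10, 3, 32)  # (seq, batch, feature)

# Boolean key padding mask of shape (batch, seq); True marks positions to ignore.
padding_mask = torch.zeros(3, 10, dtype=torch.bool)
padding_mask[:, 8:] = True  # pretend the last two tokens of every sequence are padding

out = encoder(src, src_key_padding_mask=padding_mask)
print(out.shape)  # same shape as src
```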

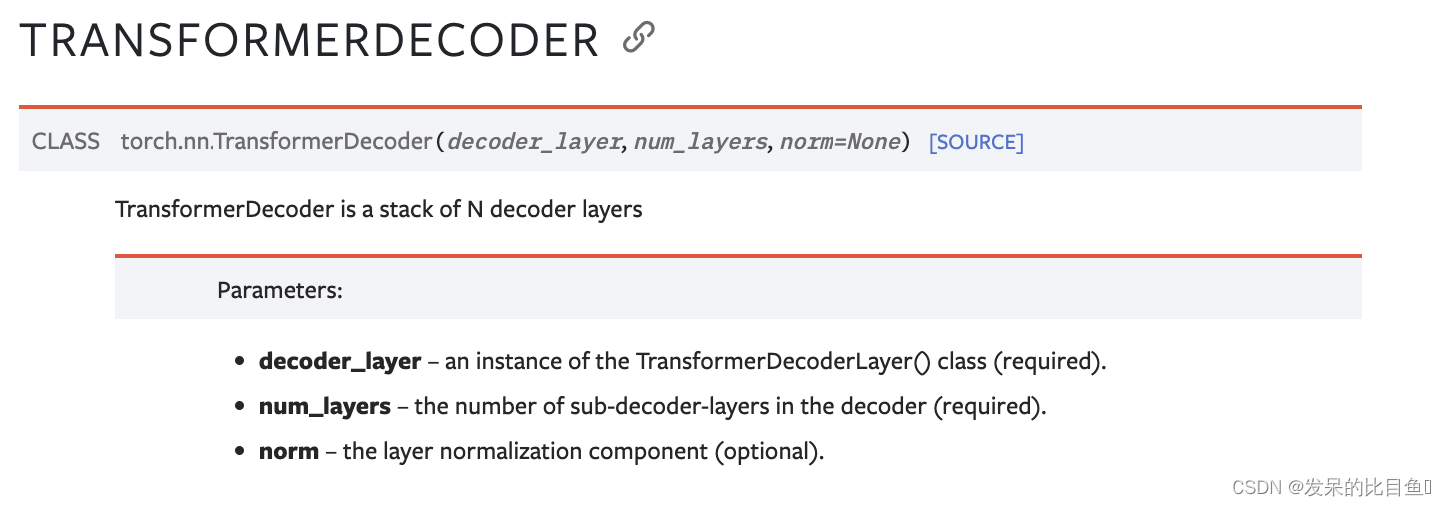

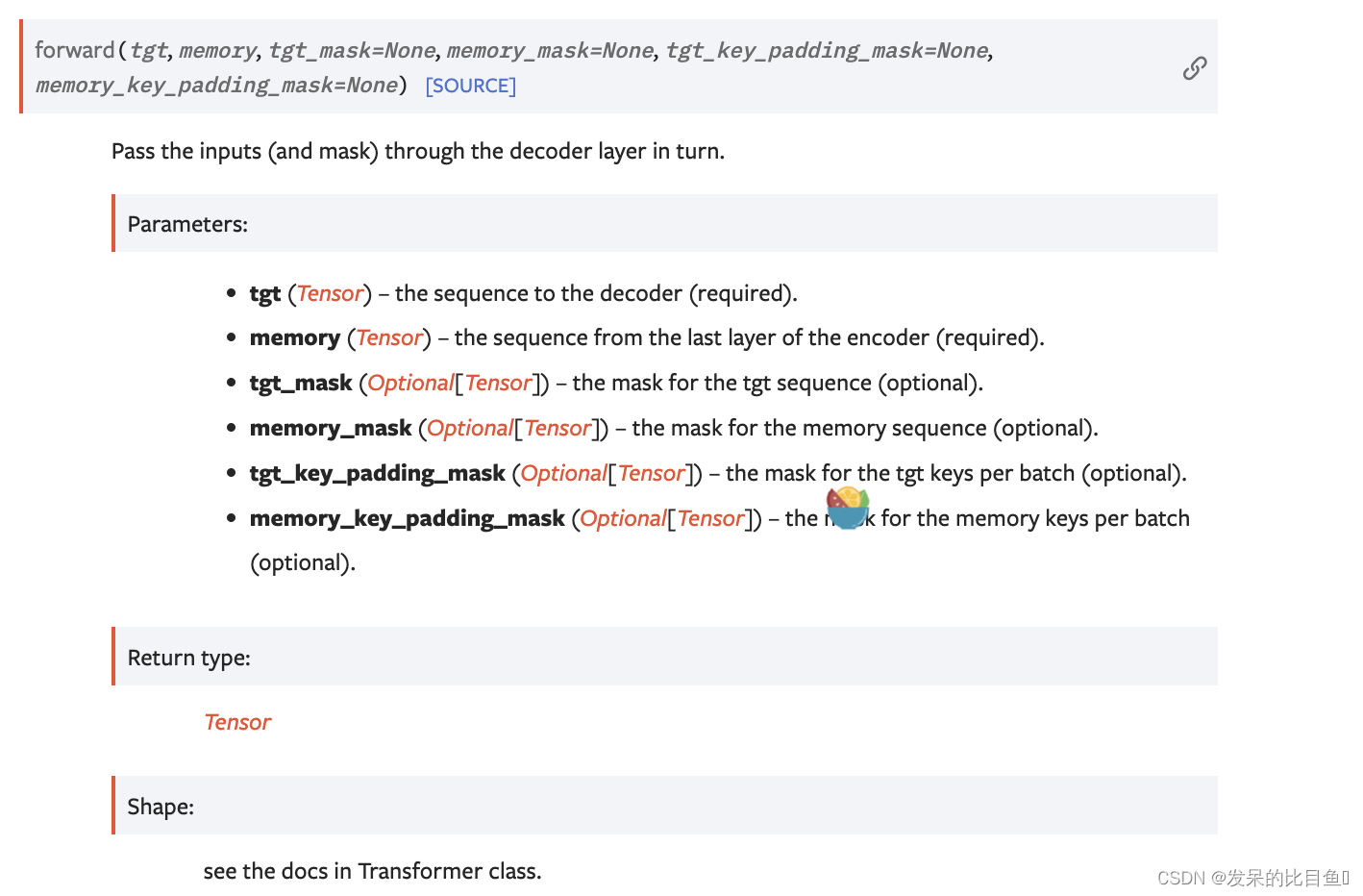

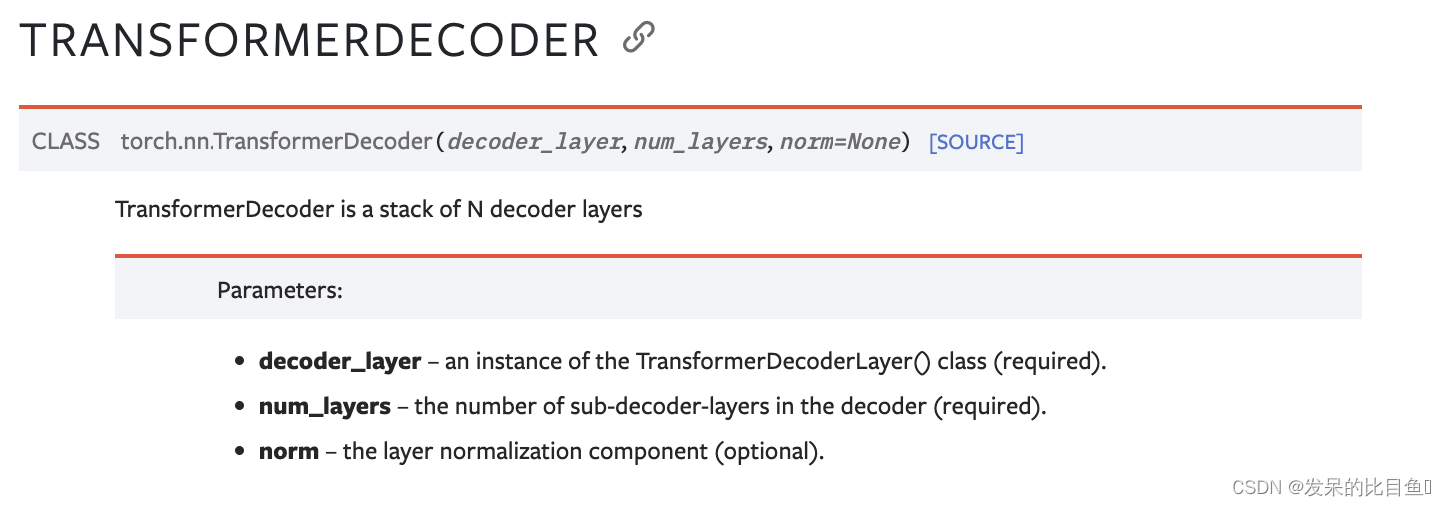

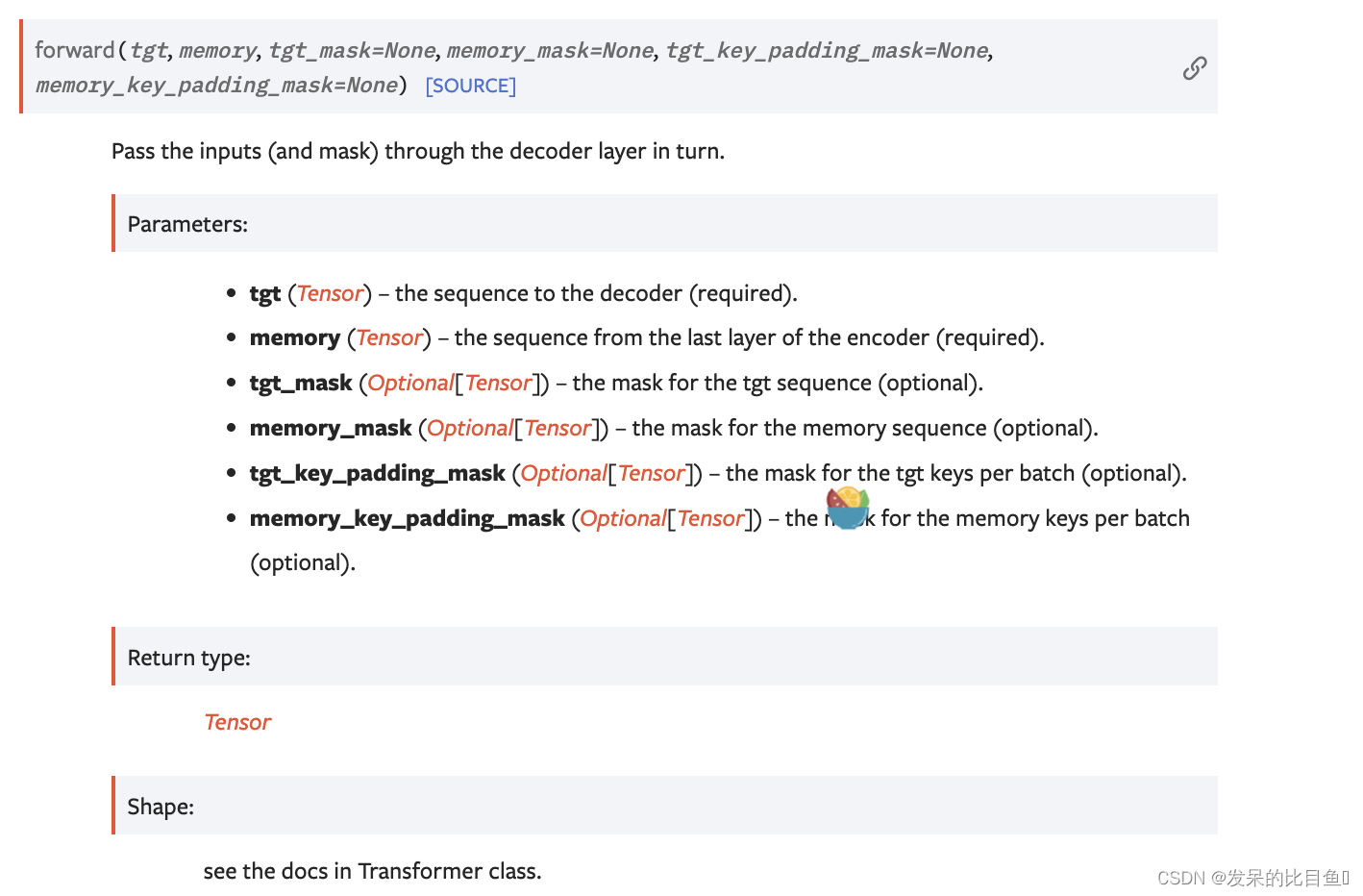

nn.TransformerDecoder

>>> decoder_layer = nn.TransformerDecoderLayer(d_model=512, nhead=8)

>>> transformer_decoder = nn.TransformerDecoder(decoder_layer, num_layers=6)

>>> memory = torch.rand(10, 32, 512)

>>> tgt = torch.rand(20, 32, 512)

>>> out = transformer_decoder(tgt, memory)
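The decoder is usually run with a causal `tgt_mask` so that each target position sees only itself and earlier positions. The sketch below builds that mask by hand with `torch.triu` and checks the effect: replacing the last target token should leave the outputs at all earlier positions unchanged. The layer sizes and the tolerance are assumptions for illustration.

```python
import torch
from torch import nn

torch.manual_seed(0)

decoder_layer = nn.TransformerDecoderLayer(d_model=32, nhead=4, dim_feedforward=64)
transformer_decoder = nn.TransformerDecoder(decoder_layer, num_layers=2)
transformer_decoder.eval()  # turn dropout off so two forward passes are comparable

memory = torch.rand(10, 3, 32)  # encoder output: (source length, batch, feature)
tgt = torch.rand(20, 3, 32)     # decoder input: (target length, batch, feature)

# Causal mask: -inf above the diagonal blocks attention to future positions.
tgt_len = tgt.size(0)
tgt_mask = torch.triu(torch.full((tgt_len, tgt_len), float("-inf")), diagonal=1)

with torch.no_grad():
    out = transformer_decoder(tgt, memory, tgt_mask=tgt_mask)

    # Replace only the final target token and run the decoder again.
    tgt_changed = tgt.clone()
    tgt_changed[-1] = torch.rand(3, 32)
    out_changed = transformer_decoder(tgt_changed, memory, tgt_mask=tgt_mask)

# Earlier positions cannot see the final token, so their outputs should agree.
earlier_unchanged = torch.allclose(out[:-1], out_changed[:-1], atol=1e-5)
# The final position does see the new token, so its output should differ.
last_changed = not torch.allclose(out[-1], out_changed[-1], atol=1e-5)
print(earlier_unchanged, last_changed)
```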

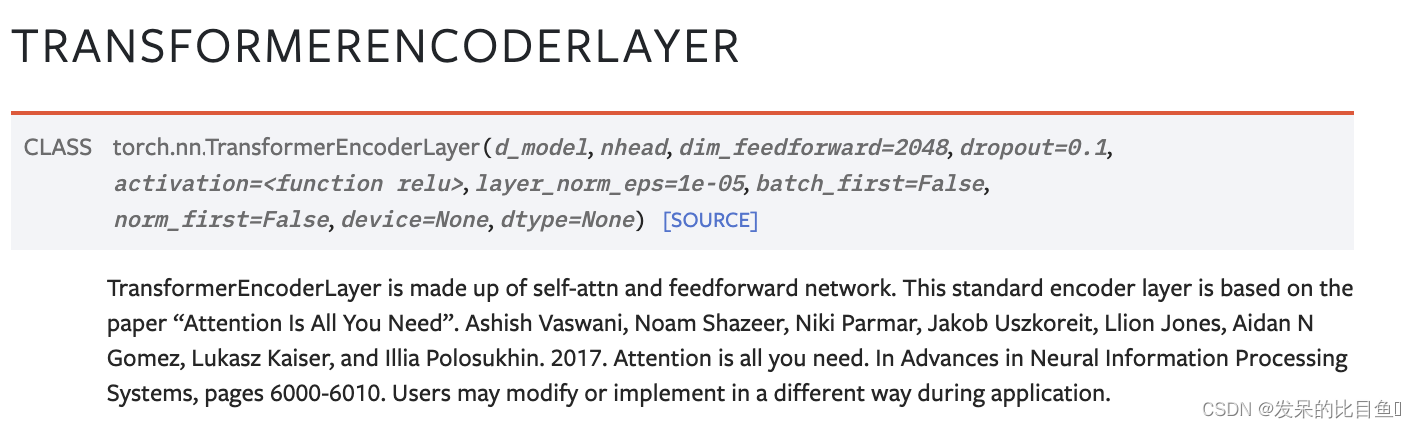

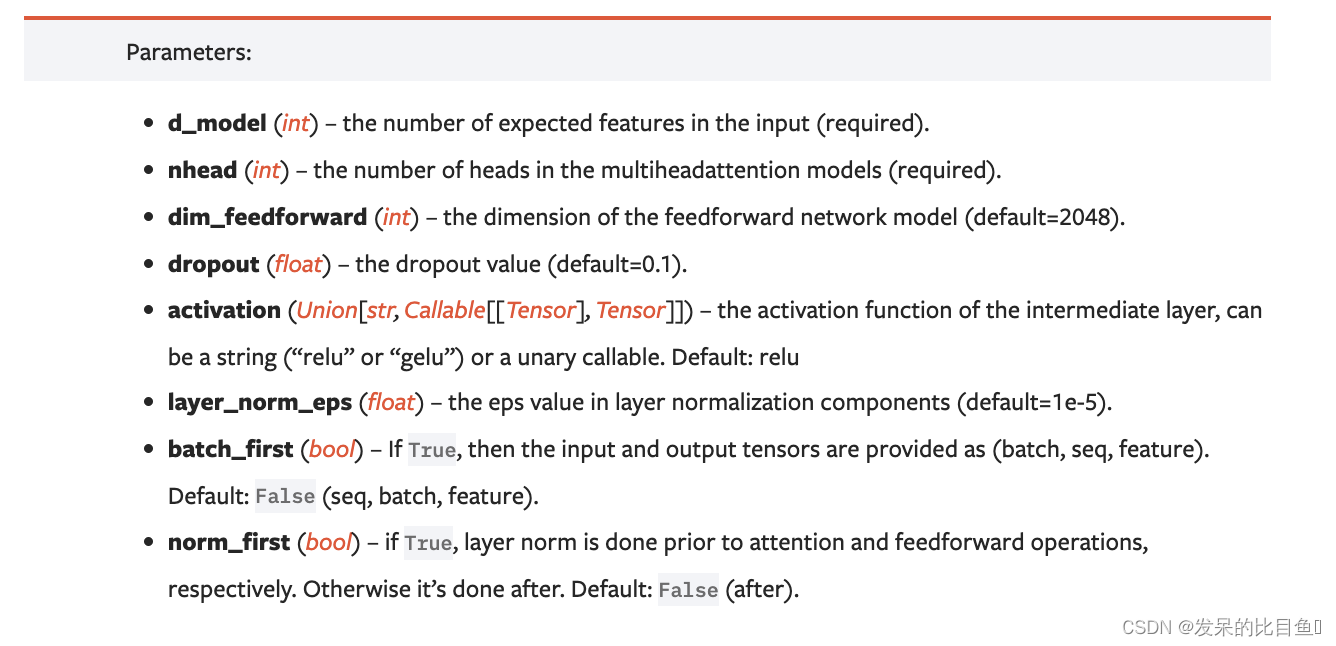

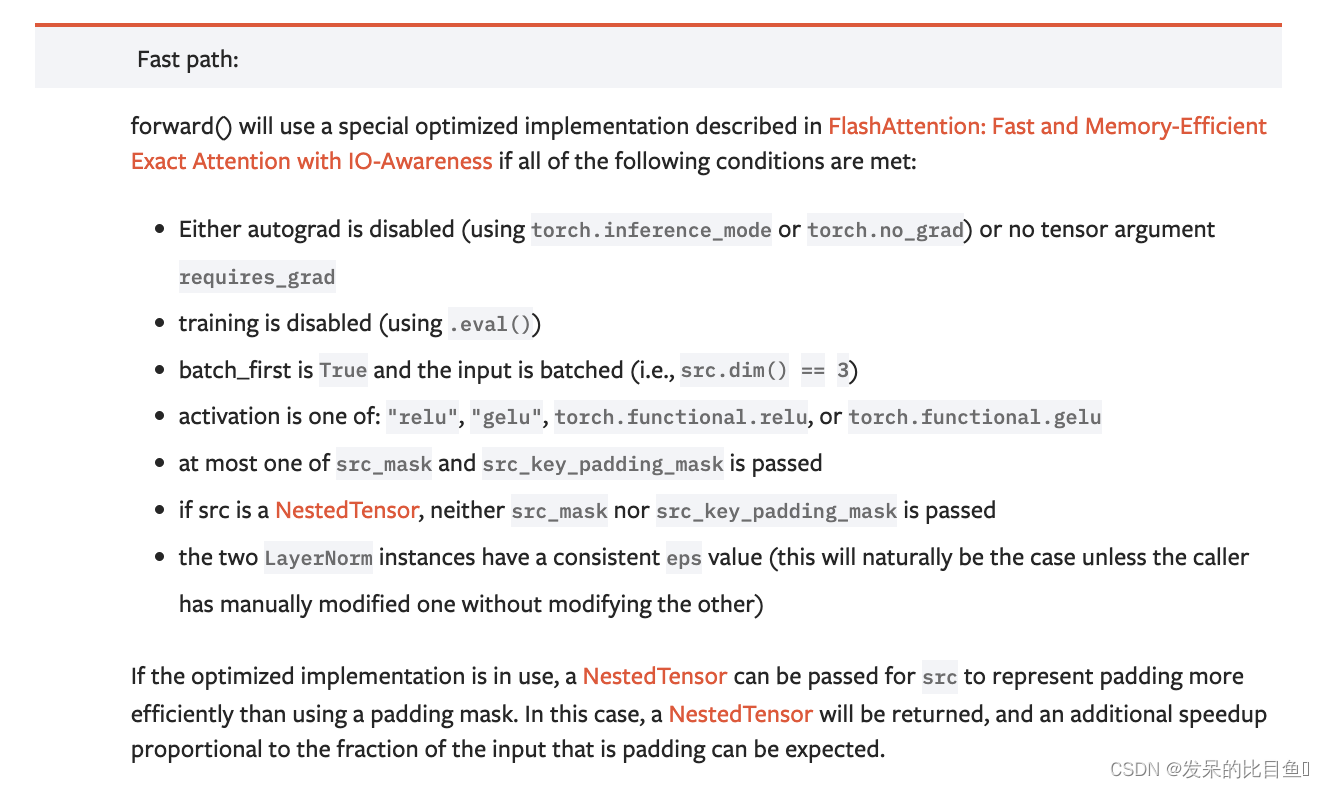

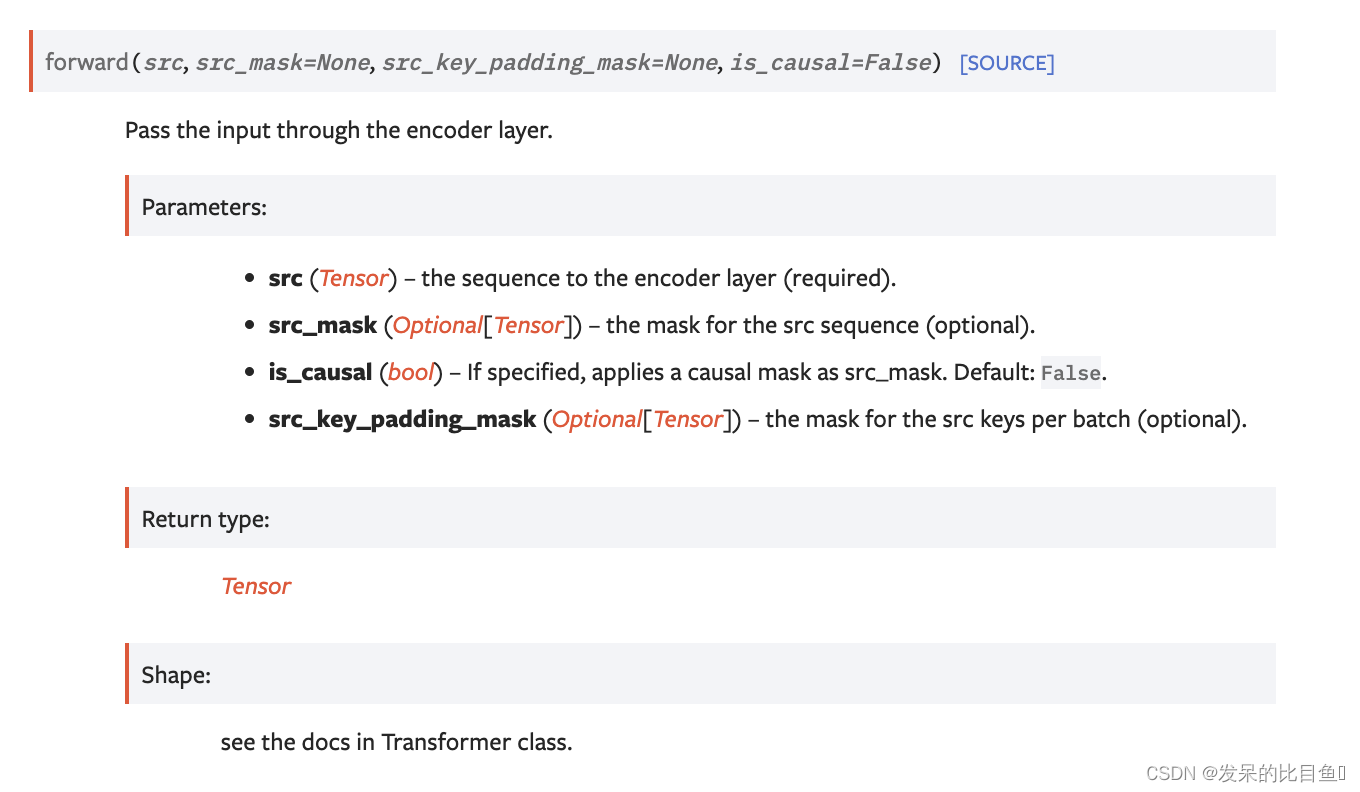

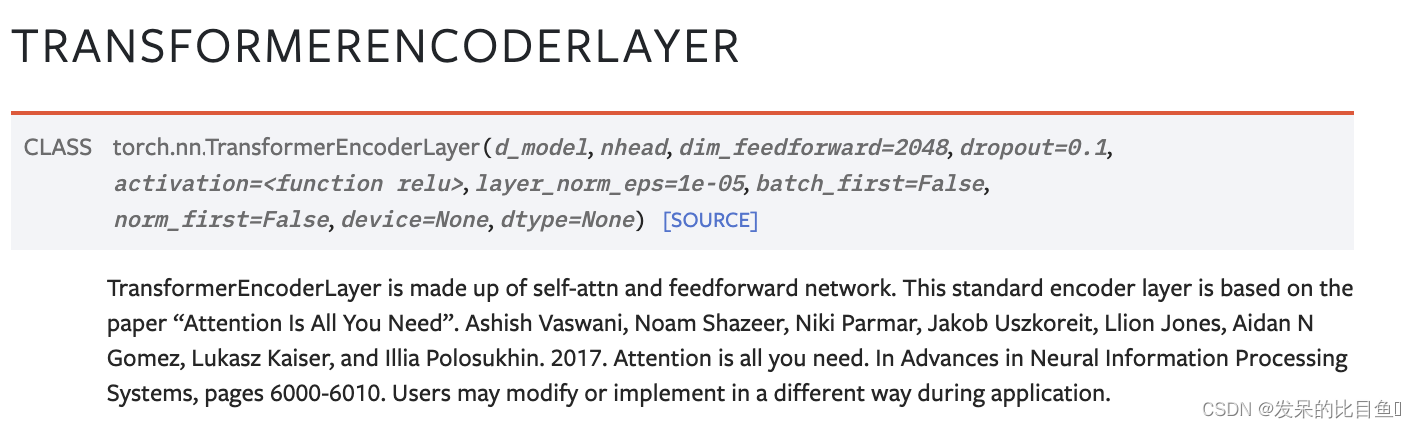

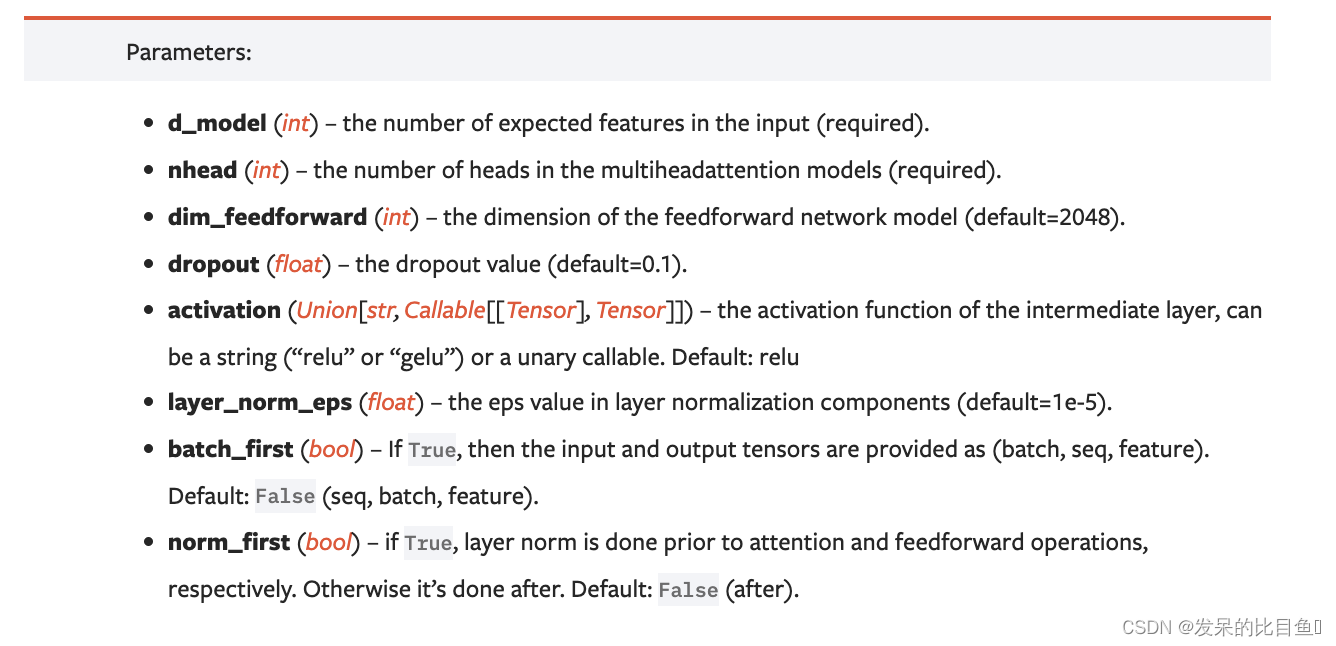

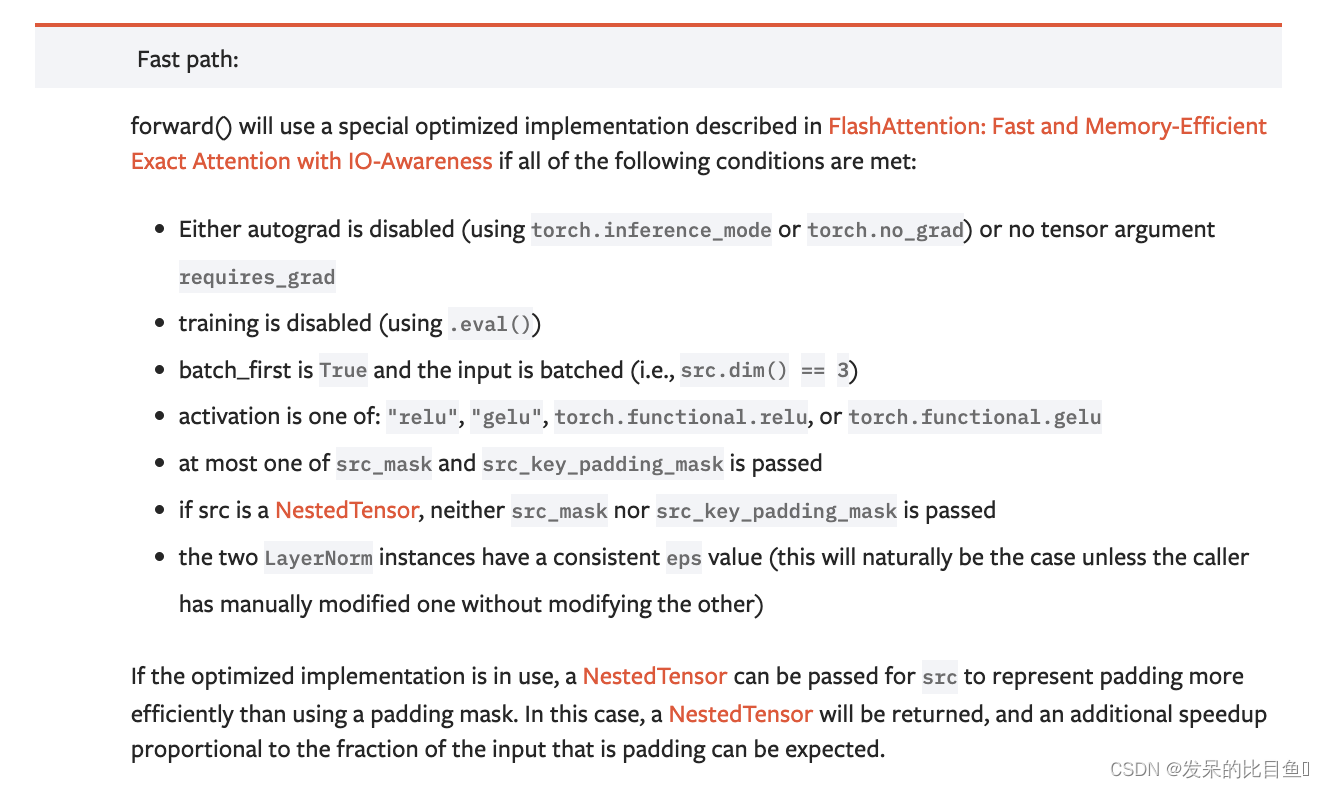

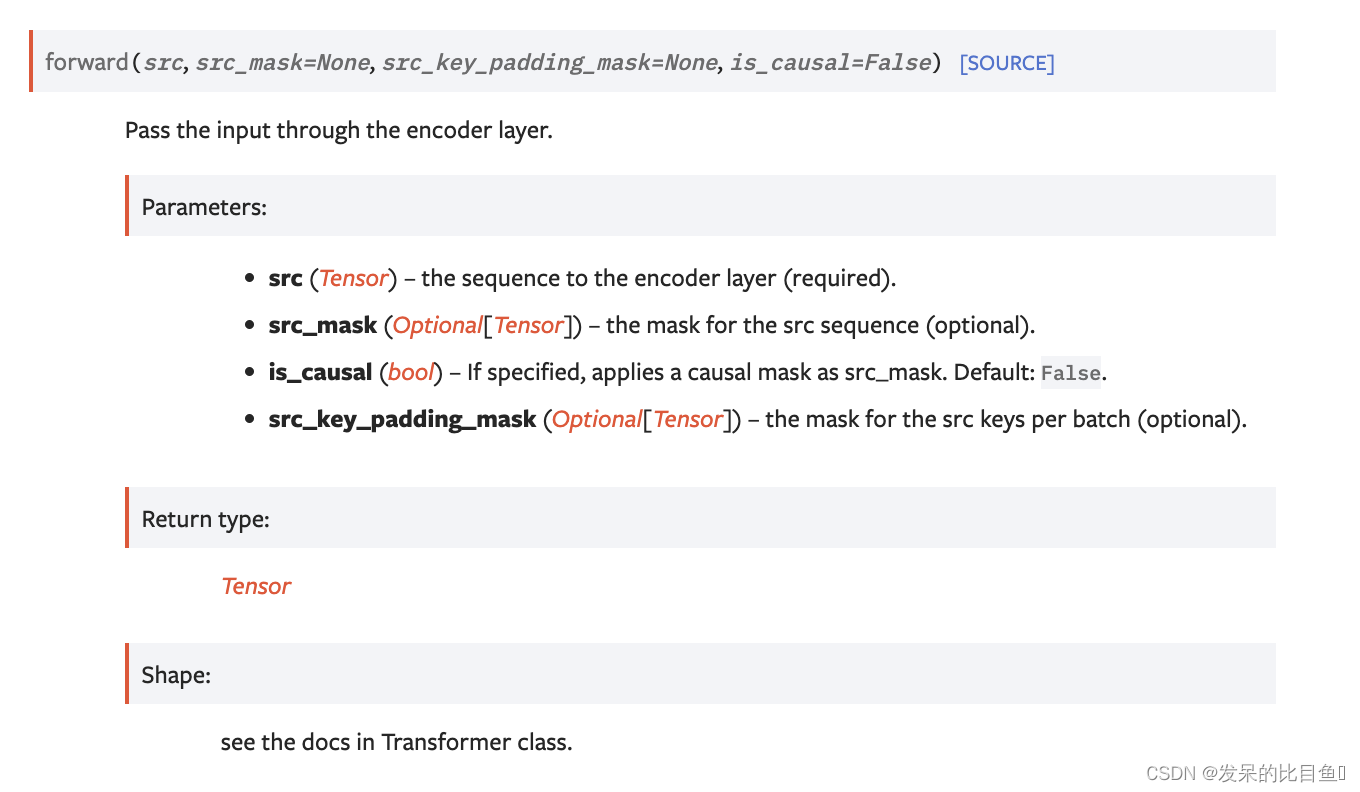

nn.TransformerEncoderLayer

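TransformerEncoderLayer is made up of a self-attention network and a feedforward network. This standard encoder layer is based on the paper "Attention Is All You Need"; users may modify it or implement it differently for their own applications.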

>>> encoder_layer = nn.TransformerEncoderLayer(d_model=512, nhead=8, batch_first=True)

>>> src = torch.rand(32, 10, 512)

>>> out = encoder_layer(src)
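To make the "self-attention plus feedforward" description concrete, the sketch below recomputes the layer's output by hand from its submodules. It assumes the default settings (`norm_first=False`, ReLU activation) and sets `dropout=0.0` so the manual result can be compared with the built-in forward pass. The small sizes are assumptions for speed.

```python
import torch
from torch import nn

torch.manual_seed(0)

# dropout=0.0 so the manual recomputation is directly comparable.
encoder_layer = nn.TransformerEncoderLayer(
    d_model=16, nhead=4, dim_feedforward=32, dropout=0.0, batch_first=True
)
src = torch.rand(2, 5, 16)  # (batch, seq, feature)

out = encoder_layer(src)

# Sub-layer 1: self-attention, then residual connection and LayerNorm.
attn_out, _ = encoder_layer.self_attn(src, src, src, need_weights=False)
x = encoder_layer.norm1(src + attn_out)

# Sub-layer 2: position-wise feedforward (default ReLU), residual, LayerNorm.
ff_out = encoder_layer.linear2(torch.relu(encoder_layer.linear1(x)))
manual = encoder_layer.norm2(x + ff_out)

matches = torch.allclose(out, manual, atol=1e-5)
print(matches)
```

Writing the two sub-layers out this way is also a convenient starting point for a custom variant, for example one that applies the normalization before each sub-layer.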

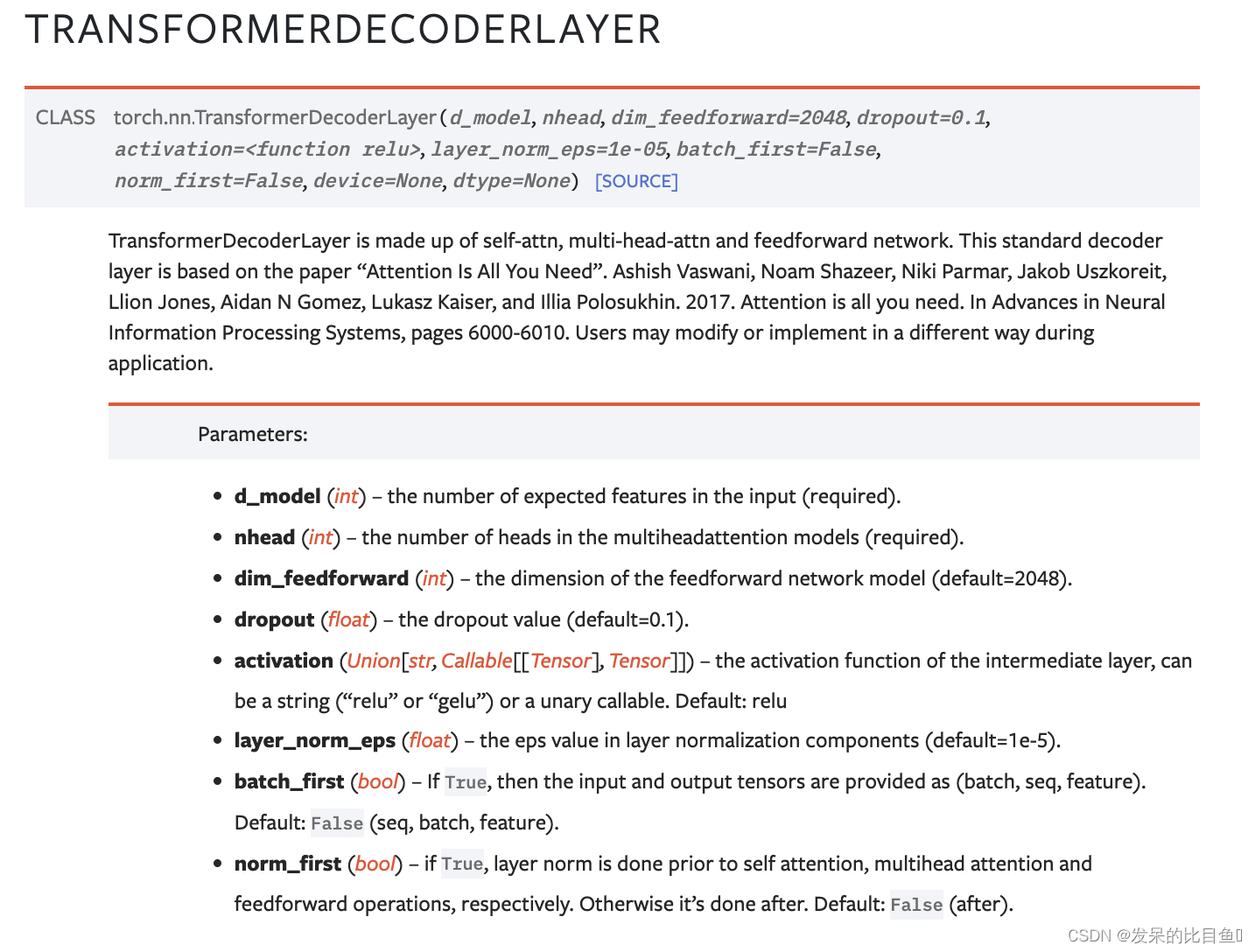

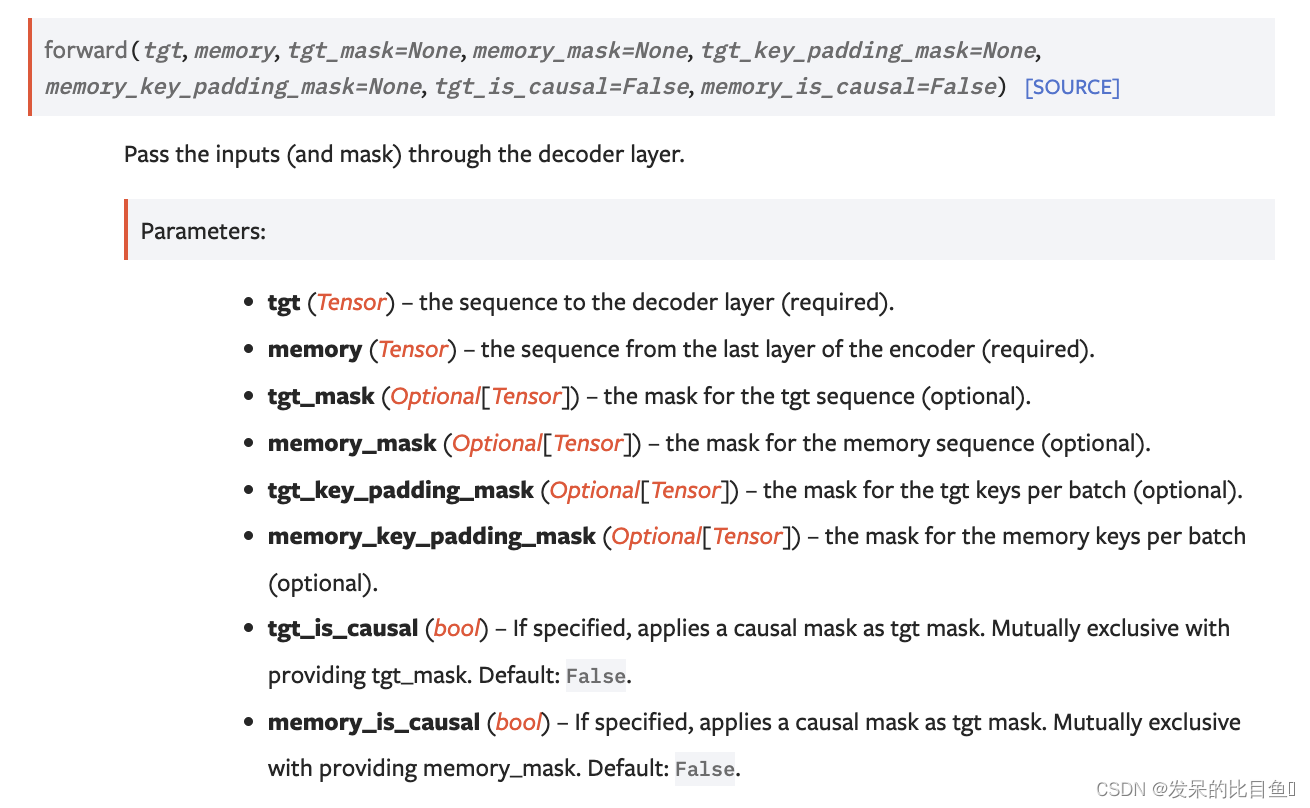

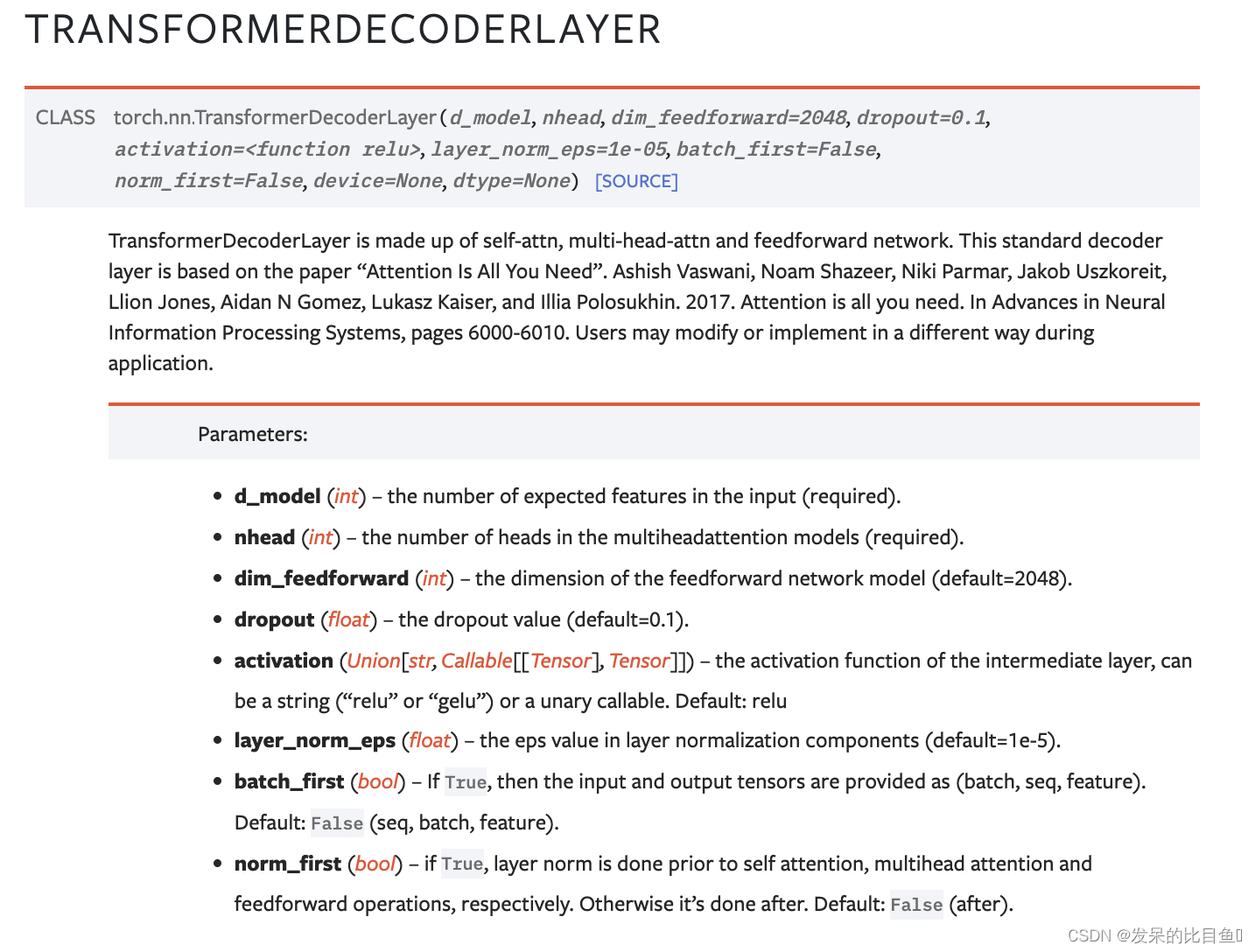

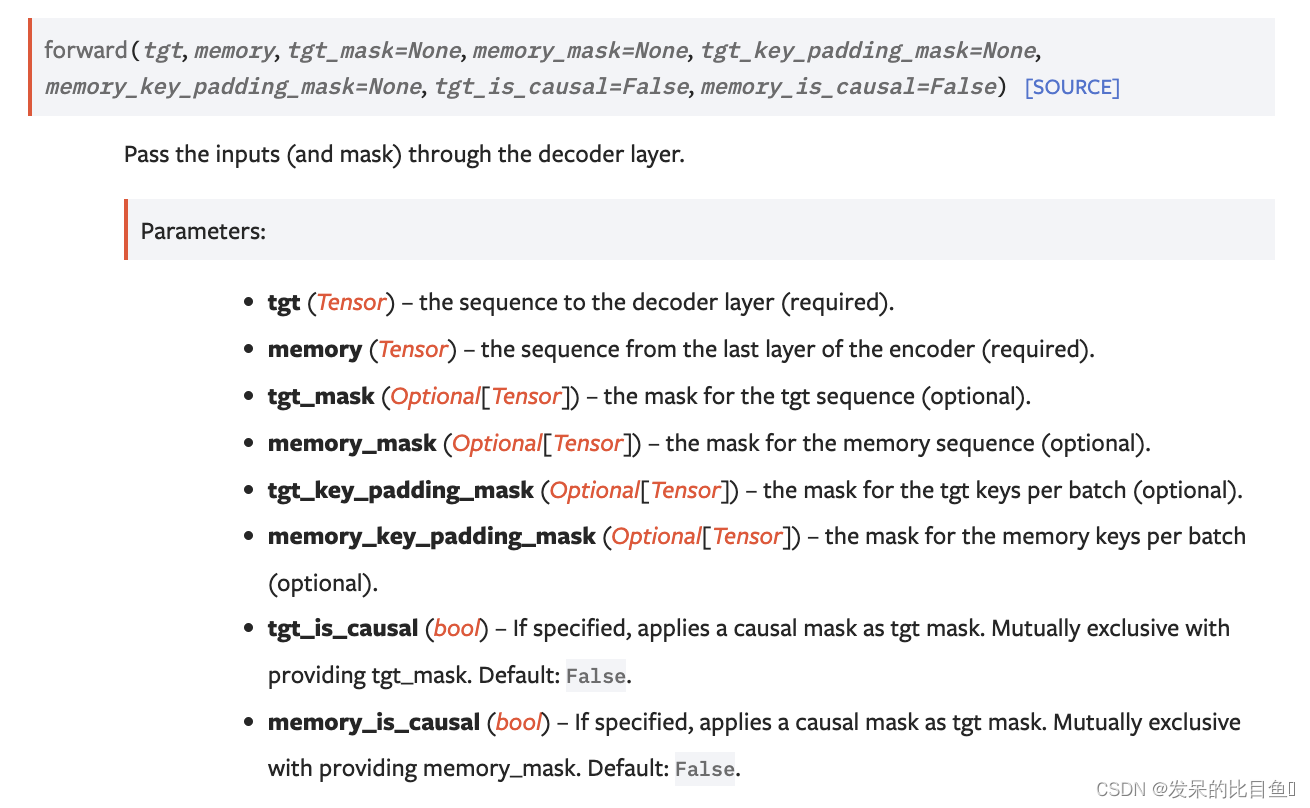

nn.TransformerDecoderLayer

>>> decoder_layer = nn.TransformerDecoderLayer(d_model=512, nhead=8)

>>> memory = torch.rand(10, 32, 512)

>>> tgt = torch.rand(20, 32, 512)

>>> out = decoder_layer(tgt, memory)

>>> decoder_layer = nn.TransformerDecoderLayer(d_model=512, nhead=8, batch_first=True)

>>> memory = torch.rand(32, 10, 512)

>>> tgt = torch.rand(32, 20, 512)

>>> out = decoder_layer(tgt, memory)
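The summary at the top describes TransformerDecoderLayer as self-attention, multi-head attention over the encoder output, and a feedforward network. The sketch below recomputes those three sub-layers by hand from the layer's submodules, assuming the default settings (`norm_first=False`, ReLU activation), no masks, and `dropout=0.0` so the result can be compared with the built-in forward pass. The small sizes are assumptions for speed.

```python
import torch
from torch import nn

torch.manual_seed(0)

decoder_layer = nn.TransformerDecoderLayer(
    d_model=16, nhead=4, dim_feedforward=32, dropout=0.0, batch_first=True
)
memory = torch.rand(2, 5, 16)  # encoder output: (batch, source length, feature)
tgt = torch.rand(2, 7, 16)     # decoder input: (batch, target length, feature)

out = decoder_layer(tgt, memory)

# Sub-layer 1: self-attention over the target sequence.
sa_out, _ = decoder_layer.self_attn(tgt, tgt, tgt, need_weights=False)
x = decoder_layer.norm1(tgt + sa_out)

# Sub-layer 2: attention from the target (queries) to the encoder memory (keys, values).
ca_out, _ = decoder_layer.multihead_attn(x, memory, memory, need_weights=False)
x = decoder_layer.norm2(x + ca_out)

# Sub-layer 3: position-wise feedforward network.
ff_out = decoder_layer.linear2(torch.relu(decoder_layer.linear1(x)))
manual = decoder_layer.norm3(x + ff_out)

matches = torch.allclose(out, manual, atol=1e-5)
print(matches)
```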

|